Validating Nokia's IP Routing & Mobile Gateway VNFs

Two of Nokia's key virtual network functions (VNFs) have been put through their paces by independent test lab EANTC – here are the results.

February 9, 2016

Network operators are facing some of the biggest technology decisions in their history and the choices they make will have a major impact on their future prosperity.

They need to decide which parts of their network architecture should be virtualized (and when) in order to become more efficient, develop new business opportunities and gain competitive advantages, yet without losing functionality or degrading performance.

These New IP-related decisions are made harder by the nature of the virtual network functions (VNFs) under consideration -- they are first-generation products that have no documented deployment track record.

That's why the independent verification of VNFs is so important to network planners and purse string holders: They need to know that any virtualization technology in which they're going to invest resources (time, people and money) is carrier grade -- fit for purpose.

As a result, independent evaluations by trusted and experienced third-party test organizations are absolutely critical to the operators' decision-making processes, as they can expedite the early, important phases of a transformation program.

That's why this test report is so important.

It provides detailed insight into the performance and scalability tests, conducted by independent test lab European Advanced Networking Test Center AG (EANTC) , of a range of virtual routing functions from Nokia Corp. (NYSE: NOK) (developed by the IP and Optical division of Alcatel-Lucent, now part of the Finnish giant).

The tests, conducted by a fiercely objective and experienced team, focused on Nokia's Virtualized Service Router (VSR) and Virtualized Mobile Gateway (VMG), two of the vendor's prime VNFs.


The full details of the test plan, processes and conclusions are laid out in the following pages. The key takeaway, though, is that Nokia has a set of VNFs that not only passed EANTC's exacting tests, but passed with flying colors across a variety of scenarios.

That's noteworthy, as a concern often mentioned by service providers in relation to VNFs is the possibility of a performance trade-off -- that a virtualized deployment will not be able to withstand the challenges associated with real-world traffic loads, node failures or cybersecurity threats, for example. The tests conducted by EANTC at Nokia's facilities will go some way to alleviating such concerns.

In addition -- and this is also important for the whole industry -- EANTC devised new test methods to evaluate vRouter and virtual route reflector (vRR) performance that are documented and repeatable and that will be shared with the relevant standards and specifications bodies.

You can find out more about the evaluation and get the chance to put questions to EANTC and Nokia executives during a live webinar, Evaluating the performance of Nokia Virtualized Service Router (VSR) and Virtualized Mobile Gateway (VMG), on Wednesday, February 17, 2016, 11:00 a.m. New York/4:00 p.m. London.

So let's get to the report, which is presented over a number of pages but which can be regarded as comprising four sections:

An introduction and evaluation overview;

Detailed analysis of the tests performed on the Virtualized Service Router - Provider Edge (VSR-PE) element and the key takeaways;

Detailed analysis of the tests performed on the Virtualized Service Router - Route Reflector (VSR-RR) element and the key takeaways;

Detailed analysis of the tests performed on the Virtualized Mobile Gateway (VMG) element and the key takeaways.

The report can be viewed as a multi-page online report or downloaded as a single PDF file.

Here's what the report covers:

Introduction and executive summary (including key takeaways)

Nokia VSR and VMG Technology components

Virtualized Service Router - Provider Edge (VSR-PE): Test setup

VSR-PE: Test results -- Lifecycle management

VSR-PE: Test results -- Data plane performance

VSR-PE: Redundancy

VSR-PE: Denial of service test

Virtualized Service Router - Route Reflector (VSR-RR): Test setup

VSR-RR: Synthetic Route Reflector scalability

VSR-RR: EVPN Route Reflection

Virtualized Mobile Gateway (VMG): Test setup

VMG: Lifecycle management

VMG: Scalability and performance tests

VMG: High availability

VMG: Bearer/subscriber scale

— The Light Reading team and Carsten Rossenhövel, managing director, European Advanced Networking Test Center AG (EANTC) (http://www.eantc.de/), an independent test lab in Berlin. EANTC offers vendor-neutral network test facilities for manufacturers, service providers, and enterprises.

Introduction and executive summary

In late 2015, Light Reading commissioned EANTC to perform an independent evaluation of a range of Nokia's virtualized routing and gateway functions.

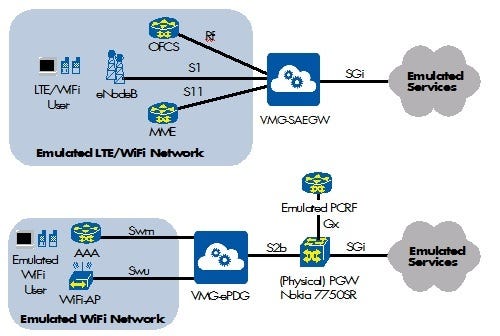

EANTC tested the Virtualized Service Router (VSR) for virtual Provider Edge (VSR-PE) and virtual Route Reflector (VSR-RR) applications. In addition, Nokia's Virtualized Mobile Gateway (VMG) was tested for various applications -- virtual System Architecture Evolution (SAE) Gateways (VMG-SAEGW), specifically Serving Gateway and Packet Data Network Gateway, and virtual Evolved Packet Data Gateway (VMG-ePDG).

EANTC validated performance, scalability and high availability capabilities in realistic test scenarios. Our team developed a detailed, reproducible test plan and executed the tests on site at Nokia's labs in Mountain View, Calif., in December 2015 and January 2016.


As we have all seen during the past few years, one of the main trends in the telecom industry is for vendors to create virtual versions of networking solutions that were formerly sold exclusively as hardware products or appliances. All of the major network equipment manufacturers offer virtual network function (VNF) versions of their product portfolio, responding to demand from their communications service provider (CSP) customers.

At EANTC, two questions guide our current evaluations of VNFs. First, how does a vendor such as Nokia re-architect software originally designed for purpose-built platforms to leverage the potential of NFV and cloud infrastructure while still meeting carrier-grade levels of scalability and resiliency? Second, how ready is a VNF solution in its entirety for real-world deployments that require functionality, performance, high availability and manageability in complex environments?

Such evaluations also provide an opportunity to advance test scenarios. In this exciting, fast-moving market, each test project is a chance to evolve test methodology beyond established rules: This report documents new test methods for virtual Provider Edge (vPE) and virtual Route Reflector (vRR) performance. EANTC will share the new test methods with the appropriate NFV standards bodies.

Executive summary

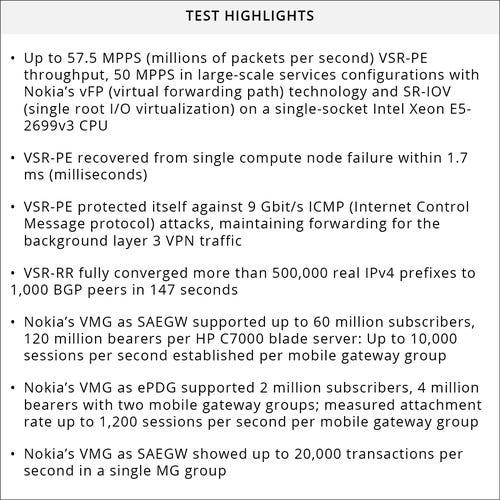

The key takeaways from the evaluation are as follows:

Nokia's VSR showed superior throughput in large-scale services scenarios using Nokia's virtual forwarding path (vFP) technology. The ability to simultaneously support a large number of Ethernet, IPv4 and IPv6 flows validates the readiness of these VNFs for real world deployments.

The VSR showed high throughput and scale even when multiple IP routing/MPLS and VPN services functions were enabled concurrently. High performance and scalability are crucial when deploying VNFs for delivery of business, mobile and residential services. EANTC evaluated how VSR can recover from compute node failures and how VSR can operate reliably during distributed denial of service (DDOS) attacks.

VSR-RR demonstrated extremely fast route convergence in large-scale, realistic scenarios, enabling new use cases for IPv4 and Ethernet VPN route management.

VMG (as SAEGW and ePDG) demonstrated flexible support for large-scale mixed Internet of Things (IoT) and consumer environments. Modular scalability and high performance were demonstrated for the number of subscribers, throughput, session attachment rates and WiFi handover.

Nokia VSR and VMG Technology components

The Nokia team, joined on site by Sri Reddy, VP, general manager of the IP Routing and Packet Core unit within Nokia's IP/Optical (ION) Business Group, explained the background of Nokia's technology and the key innovations related to the virtualized portfolio within the company's IP Networking group. Innovations such as virtual forwarding path (vFP) technology, Symmetric Multi-Processing (SMP) and 64-bit OS significantly improve performance and scalability for virtual network functions.

Derived from the hardware forwarding path versions developed by the company over more than a decade, vFP has been extensively engineered for throughput optimization.

SMP is a multi-threaded software approach whereby different processes can be scheduled and run concurrently on different CPU cores for increased service scale and routing performance on x86 platforms.

The 64-bit software architecture enables access to more addressable CPU memory for applications that demand a lot of memory.

Armed with this knowledge (and high expectations), we began the throughput performance test cases.

As is typical for virtual routers, Nokia used SR-IOV (single root I/O virtualization) to attach the physical Ethernet ports directly to the virtual network function, bypassing any virtual switch/bridge. The VSR/VMG also supports OVS-DPDK (Open vSwitch with the Data Plane Development Kit) and PCI passthrough, but these options were not tested by EANTC.

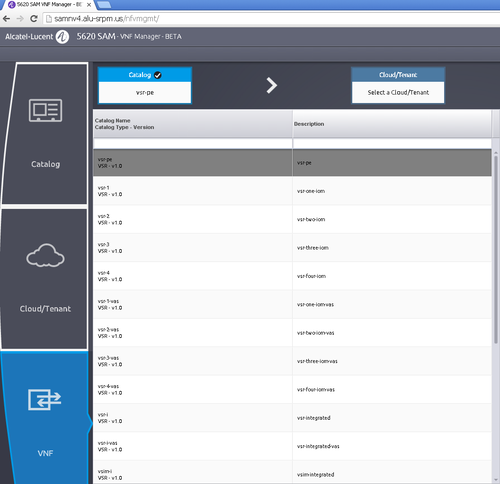

Lifecycle Management (LCM) is crucial for VNF deployment. VNFs can be managed by OpenStack's in-house tools, with Nova commands or Heat templates. However, this is a cumbersome method requiring knowledge of OpenStack and its command-line interfaces (CLIs). Nokia's 5620 SAM (Service Aware Manager) avoided this time-consuming process by supporting VNF lifecycle management in addition to its network and service management of Nokia's IP routing platforms.

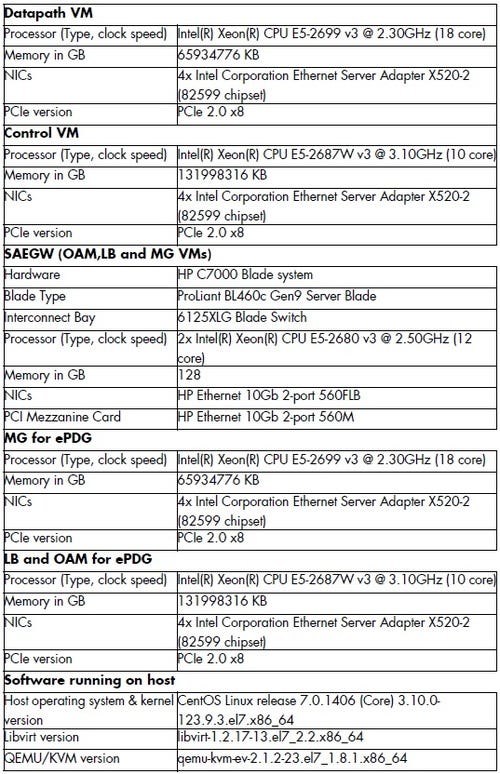

The hardware under test


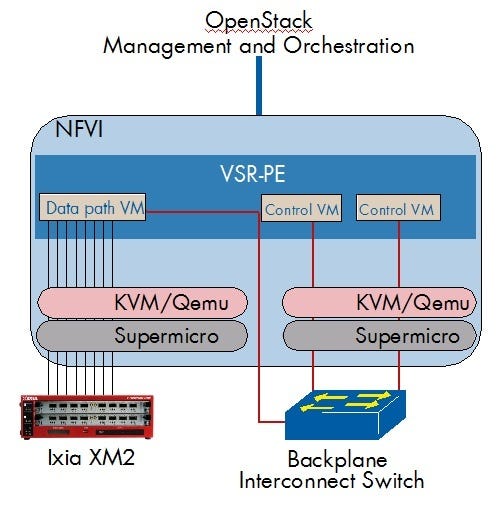

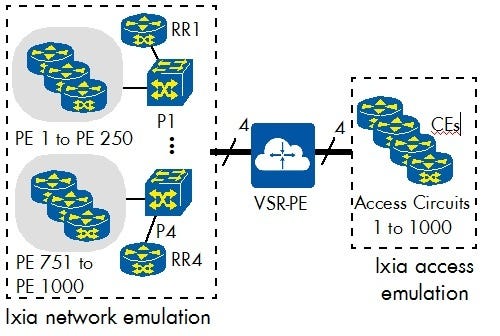

Virtualized Service Router — Provider Edge (VSR-PE): Test setup

Nokia instantiated the Virtualized Service Router VNFs on standard Supermicro servers running OpenStack Liberty. OpenStack was chosen in preference to a commercial infrastructure solution to demonstrate that VSR performs independently of any NFV infrastructure solution.

VSR was provided as one data path VM implemented on a compute node with a single-socket Intel Xeon E5-2699v3 CPU and four 2-port Intel X520-2 10GbE (10 Gigabit Ethernet) cards, plus two control VMs implemented on a second compute node. In addition, OpenStack was configured with a control node running virtual infrastructure management tasks, as in all OpenStack installations.

In our test of the VSR-PE, we used two physical test topologies for each different test area, as shown in Figure 1 (below) and later in Figure 8.

Figure 1: Physical Test Setup VSR-PE

Nokia software version TiMOS-C-0.0.B2-4601 was used for all VSR-PE tests. Nokia 5620 SAM (Service Aware Manager), software version 13.0 Patch 6596, was used for VNF management and lifecycle management.

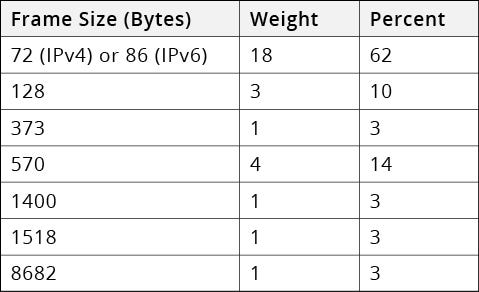

EANTC defined an IPv4 and IPv6 traffic mix ("IMIX") with a range of packet sizes to ensure a realistic traffic load for the data path VMs as shown in the following table:

Figure 20:

We executed all VSR-PE tests using an Ixia XM2 and tester ports of 10GigE.

VSR-PE: Test results – Lifecycle management

Key takeaway: Nokia successfully demonstrated extensive lifecycle management support by the 5620 SAM's VNF Manager, including instantiation, scale-out, monitoring and alarms.

We started the test session by on-boarding and instantiating the required VNFs.

Step 1: Select VNF from the VNF catalog of all onboarded images (as seen in the Figure 2 screenshot below). Each item in the VNF catalog represents a Heat Orchestration Template (HOT), which defines the personality of the VSR/VMG. Multiple configurations were available (some with different VM personalities, additional Data Path VMs, redundant VMs). A Heat environment file was used to pass parameters into the HOT; these parameters included addressing, ports, and slot configuration.

Figure 2: 5620 SAM VNF Manager

Step 2: Select an OpenStack cloud, in this case the Liberty nodes in the Nokia lab.

Step 3: Verify and partially change the VNF settings such as flavor, management IP etc.

Step 4: Create and deploy the VNF.

Step 5: Scale-out the VNF by adding another Data Path VM to the virtual router.

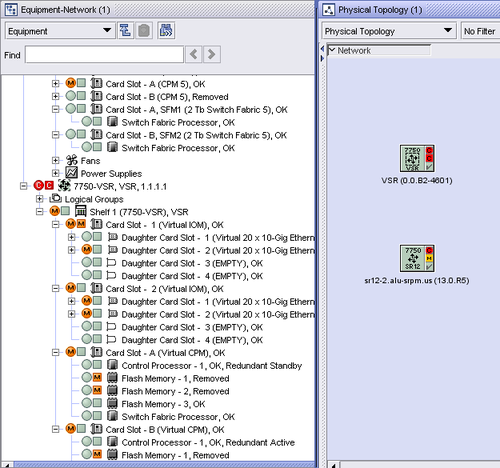

Step 6: Manage both a physical 7750 SR router and a VSR from the same 5620 SAM Element management system (as shown below in Figure 3).

Figure 3: 5620 SAM Element Management

During all test steps, we monitored the alarms and other notifications displayed by the 5620 SAM's element management system, comparing them with the status of the actual network elements as accessible with OpenStack tools and CLIs. The 5620 SAM displayed all relevant alarms and notifications that we were looking for and was always in sync with the actual state of the VNFs.

We also validated that the newly deployed VNFs functioned correctly, using data traffic.

It was reassuring to see that all the standard lifecycle management tasks above could be conducted using 5620 SAM, without the need to revert to OpenStack CLI tools, especially as we used vanilla OpenStack.

VSR-PE: Test results – Data plane performance

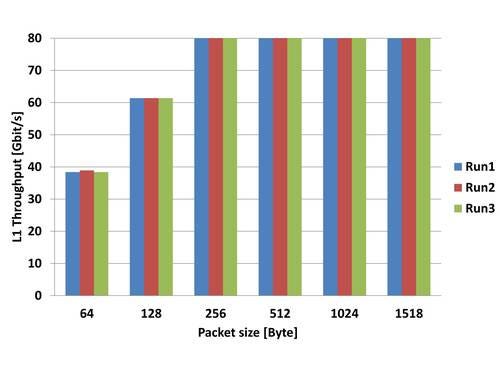

Key takeaway: VSR reached more than 57.5 million packets per second per single-socket Intel Xeon E5-2699v3 CPU for IPv4 and IPv6 traffic, forwarding at 80Gbit/s speed for any RFC 2544 runs with packet sizes of 256 bytes or larger.

Throughput benchmarking was conducted with Nokia's vFP (virtual forwarding path) technology and SR-IOV (single root I/O virtualization) on a single-socket Intel Xeon E5-2699v3 CPU.

The first task was to set a baseline. The throughput performance of virtual routers needs to be baselined, as it depends on complex interactions between standardized x86 hardware and vendor-specific software implementations.

In x86 environments, very small packet loss ratios are common due to the non-realtime nature of the environment. Given the current state of the industry, a minimal packet loss ratio has to be accepted. We agreed with the Nokia team that 20 ppm (packets per million), or a packet loss ratio of 0.00002, was acceptable. As a result of that decision, EANTC will submit a proposal to the IETF to evolve RFC 2544 (the industry-standard benchmarking methodology for network devices) for virtual router testing.
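To make the 20 ppm criterion concrete, here is a minimal Python sketch of an RFC 2544-style throughput search that tolerates a small loss ratio instead of requiring zero loss. The `measure` callback, the `toy_device` model and the search resolution are assumptions made for illustration; they are not EANTC's test scripts or any Ixia API.

```python
from typing import Callable, Tuple

LOSS_TOLERANCE = 20 / 1_000_000  # 20 ppm, i.e. a packet loss ratio of 0.00002


def within_tolerance(sent: int, received: int,
                     tolerance: float = LOSS_TOLERANCE) -> bool:
    """Return True if the observed loss ratio does not exceed the tolerance."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return (sent - received) / sent <= tolerance


def throughput_search(measure: Callable[[float], Tuple[int, int]],
                      line_rate: float,
                      resolution: float = 0.001) -> float:
    """Find the highest offered load that stays within the loss tolerance.

    `measure(rate)` is a hypothetical hook that runs one trial at `rate`
    (same unit as `line_rate`) and returns (frames_sent, frames_received).
    """
    # Try full line rate first; only search if that trial fails.
    sent, received = measure(line_rate)
    if within_tolerance(sent, received):
        return line_rate

    low, high, best = 0.0, 1.0, 0.0  # fractions of line rate
    while high - low > resolution:
        mid = (low + high) / 2
        sent, received = measure(mid * line_rate)
        if within_tolerance(sent, received):
            best, low = mid, mid   # passed: try a higher load
        else:
            high = mid             # failed: back off
    return best * line_rate


def toy_device(capacity: float) -> Callable[[float], Tuple[int, int]]:
    """Toy model (assumption): forwards up to `capacity`, drops the excess."""
    def measure(rate: float) -> Tuple[int, int]:
        sent = int(rate * 1_000_000)
        received = int(min(rate, capacity) * 1_000_000)
        return sent, received
    return measure
```

For example, `throughput_search(toy_device(50.0), 80.0)` converges on a value close to 50, while a device model that never drops returns the full line rate after the first trial.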

With regard to the system under test (VSR), the theoretical maximum throughput was 80 Gbit/s, based on the number of Ethernet interfaces available. More important than the data throughput, however, is the number of packets forwarded; the router's effort is directly tied to the per-packet processing tasks.

We conducted multiple RFC 2544 throughput performance test runs, beefed up with 1 million IPv4 or IPv6 flows to make the test more challenging and more realistic for a service provider router scenario.

Figure 4: IPv4 throughput, 20 ppm loss tolerance

With 1 million IPv4 flows configured, the VSR achieved line rate of 80 Gbit/s full-duplex for test runs with 256-byte-sized and larger packets. Below this packet size, the VSR achieved a maximum packet processing rate of up to 57.5 MPPS (for 64-byte-sized packets) or 50.9 MPPS (for 128 byte-sized packets).
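The relationship between frame size and packet rate can be checked with a short calculation. The sketch below assumes the 80 Gbit/s aggregate comes from eight 10GbE ports (four 2-port cards, as described in the test setup) and that each frame occupies an extra 20 bytes on the wire for preamble and inter-frame gap, which is standard for Ethernet; the function names are illustrative only.

```python
ETHERNET_OVERHEAD_BYTES = 20  # 8-byte preamble/SFD + 12-byte inter-frame gap


def line_rate_mpps(frame_bytes: int, link_gbps: float = 10.0,
                   ports: int = 8) -> float:
    """Theoretical maximum frame rate, in million packets per second."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return ports * link_gbps * 1e9 / bits_on_wire / 1e6


def share_of_line_rate(measured_mpps: float, frame_bytes: int) -> float:
    """Fraction of the theoretical maximum that a measured rate represents."""
    return measured_mpps / line_rate_mpps(frame_bytes)


if __name__ == "__main__":
    for size, measured in ((64, 57.5), (128, 50.9)):
        print(f"{size} bytes: ceiling {line_rate_mpps(size):.2f} Mpps, "
              f"measured {measured} Mpps "
              f"({share_of_line_rate(measured, size):.0%} of line rate)")
    print(f"256 bytes: ceiling {line_rate_mpps(256):.2f} Mpps")
```

Under these assumptions the ceiling is about 119.05 Mpps at 64 bytes, 67.57 Mpps at 128 bytes and 36.23 Mpps at 256 bytes. The 256-byte ceiling lies below the 57.5 Mpps the VSR sustained with 64-byte packets, which is consistent with line rate being reached at 256 bytes and above.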

The results for single-frame size tests are expected and acceptable; the average packet size measured at various Internet exchanges and similarly average packet sizes reported by service providers for their VPN services are typically around 500 bytes (and growing).

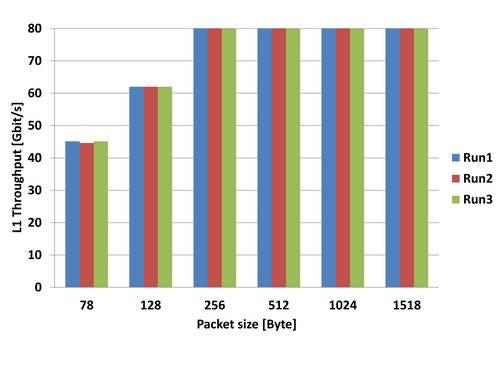

Figure 5: IPv6 throughput, 20 ppm loss tolerance

The IPv6-only throughput with identical packet loss tolerance of 20 ppm shows almost exactly the same results as the IPv4-only test, reaching line rate with any packet size of 256 bytes or larger and forwarding a maximum of 57.5 MPPS (for 78-byte-sized frames).

This confirms that Nokia's vFP technology can forward IPv6 and IPv4 packets equally well. Furthermore, the VSR maintained its high level of forwarding performance irrespective of the number of flows traversing the vFP (tested up to 2 million).

Throughput with a large number of services

Key takeaway: VSR reached 79.5Gbit/s throughput in a realistic service configuration with 2,250 IP and Ethernet VPN instances, 200,000 MAC addresses and 3.4 million IPv4/v6 routes providing 2 million traffic flows. The minimum/average/maximum latency for any frame forwarded across the Ethernet and IP services was 13 μs/129 μs/1474 μs.

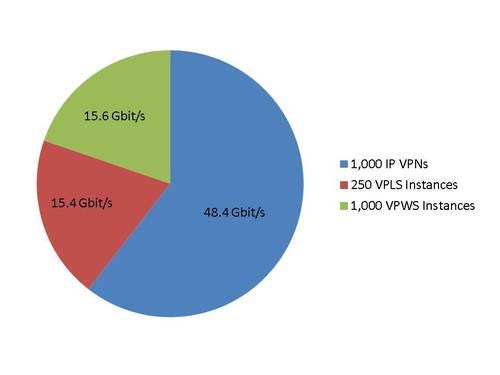

Once the basic throughput had been confirmed, we asked Nokia to configure a large and realistic number of services on the VSR, including IP VPNs and Ethernet point-to-point (VPWS, virtual private wire service) and multipoint (VPLS, virtual private LAN service) services, each with realistic data plane and control plane scale.

Figure 6: Full-scale test logical setup

Nokia agreed to configure a scenario with 1,000 IP VPN services, 1,000 Ethernet point-to-point services and 250 Ethernet multipoint VPN service instances.

The VSR carried a total of 1 million BGP VPN routes plus another 5 million iBGP IPv4 routes. Furthermore, there were IP filtering rules and QoS ingress 'policers' and egress queues configured.

The EANTC team configured the Ixia emulator as shown in the figure above to terminate all these services and forward 79.5 Gbit/s of traffic equally distributed across all services (IMIX packet sizes). In a second test run, we confirmed the maximum packet rate with smaller packets that did not saturate the line rate (173-byte-sized); in this case the VSR was able to transit more than 50 million packets per second (MPPS).

All parameters are summarized in the table below.

These results confirm Nokia's claims: The VSR is able to scale to realistic, large multi-service edge routing scenarios, showing superior performance of the data plane and control plane.

Figure 21:

By any measure, this configuration was very realistic and representative for a provider edge router transporting a range of fixed and mobile services.

Figure 7: 79.5 Gbit/s Throughput With Services

Despite the additional memory and computing overhead required to maintain all these services, the VSR did not show any service degradation: The total throughput reached 79.5 Gbit/s. Because of the MPLS and dot1q encapsulation overhead, we couldn't configure the traffic generator to send exactly 80Gbit/s of traffic. The minimum/average/maximum latency for any frame forwarded across the Ethernet and IP services was 13 µs/129 µs/1474 µs.

Traffic classification

Quality of service is implemented by detecting and classifying traffic into classes and subsequently forwarding these classes through the configured policers, queues and shapers for each class while managing rates and congestion. In physical aggregation routers, this function is always implemented by specialized hardware to handle its real-time nature, accuracy requirements and latency sensitivity.

However, an x86 processor does not have access to specialized hardware functions. Nokia explained that the QoS architecture of its hardware products has been ported to vFP technology, providing identical classification functions and very similar congestion management capabilities.

In this test case, we configured and verified traffic classification for ingress policers, egress queues and scheduler per service and validated that the VSR forwarded traffic correctly in the respective policers and queues according to the received marking. We verified rate limiting by applying a limit on policers applied for a group of services and observed that the forwarding rate for those services matched the configured rate.
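As an illustration of what rate limiting with a policer means, the following Python sketch implements a generic single-rate token-bucket policer and measures the rate it lets through. This is a textbook model under assumed parameters (rate, burst size, packet size); it is not a description of how Nokia's vFP implements policers.

```python
class TokenBucketPolicer:
    """Generic single-rate token-bucket policer (illustrative model only)."""

    def __init__(self, rate_bps: float, burst_bytes: int) -> None:
        self.rate_bytes_per_s = rate_bps / 8
        self.burst_bytes = burst_bytes
        self.tokens = float(burst_bytes)  # bucket starts full
        self.last_time = 0.0

    def allow(self, now: float, packet_bytes: int) -> bool:
        """Refill tokens for the elapsed time, then forward or drop the packet."""
        elapsed = now - self.last_time
        self.last_time = now
        self.tokens = min(self.burst_bytes,
                          self.tokens + elapsed * self.rate_bytes_per_s)
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False


def forwarded_rate_bps(policer: TokenBucketPolicer, offered_bps: float,
                       packet_bytes: int, duration_s: float) -> float:
    """Offer evenly spaced packets at `offered_bps` and return the forwarded rate."""
    packet_bits = packet_bytes * 8
    packets = int(offered_bps * duration_s / packet_bits)
    interval = packet_bits / offered_bps
    forwarded_bytes = 0
    for i in range(packets):
        if policer.allow(i * interval, packet_bytes):
            forwarded_bytes += packet_bytes
    return forwarded_bytes * 8 / duration_s
```

Offering 50 Mbit/s of 500-byte packets to a 10 Mbit/s policer for ten seconds yields a forwarded rate within about 1% of 10 Mbit/s (the initial burst accounts for the small excess), which is the kind of match between configured and observed rate that the test checked.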

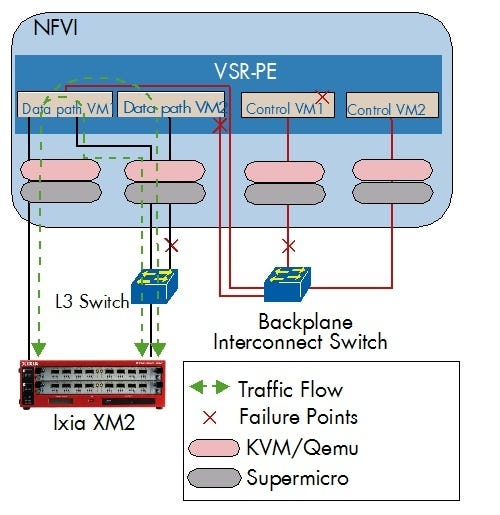

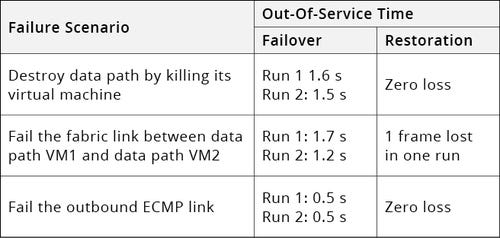

VSR-PE: Redundancy

Services need to be highly available so that CSPs can maintain committed service levels, irrespective of whether virtual or physical network functions are used to deliver these services.

In the physical world, we have typically tested link and node failures, making sure there was no single point of failure. In the virtual world, there are additional test scenarios validating the redundancy of virtual software components that we focused on in this test session.

Internally, the VSR implementation is split into two component types: control VM and data path VM. Each of these needs to be protected against failure in different ways.

Figure 8: Failover scenarios

Control VM failover

First, we tested the control plane's resiliency. This requires a secondary control VM to be up and running, as shown in the logical diagram above. We tested both a graceful failover initiated via the command-line interface and a forced failover triggered by killing the virtual machine running the active control plane.

In both cases, VSR continued to serve all sessions without any noticeable 'flapping.' We measured 17ms as the maximum out-of-service time when killing the active control VM and 2ms of out-of-service time when gracefully switching over the control VM via CLI.

Data path VM redundancy

Data path VMs can fail as well, affecting services in a number of ways. Since the data path VM implements outbound ports, the test configuration had to include a mechanism for port redundancy. Nokia chose OSPF equal-cost multipath (ECMP). An external L3 switch, as depicted in the diagram above, was installed to avoid exposing ECMP to the Ixia test equipment. In addition, a Bidirectional Forwarding Detection (BFD) session with a 100ms timer was configured between the L3 switch and VSR to detect link failures.

We failed and restored the data path VM infrastructure in three different ways. Each test case was repeated twice to check for consistent test results.

Figure 22:

VSR-PE: Denial of service test

Key takeaway: VSR successfully protected itself against 9Gbit/s ICMP attack traffic, continuing to forward 42.8Gbit/s background traffic without loss while maintaining less than 72% CPU load.

Massive distributed denial of service (DDOS) attacks can generate a huge flood of incoming packets directed to a router's CPU, effectively halting all control plane activity. In a virtual router without specialized forwarding hardware, a DDOS attack could even halt the data plane.

It is very important for virtual routers to protect themselves against such attacks. Nokia's VSR implements DDOS protection by specialized front-end queuing algorithms designed to drop large quantities of malicious traffic efficiently.

At the time of the test, Nokia informed us that the current VSR software already supported the detection of an ICMP (Internet Control Message Protocol) attack destined for the VSR's control VM. Detection for other types of traffic will be gradually added, according to the Nokia team. We configured a large amount of ICMP attack traffic (ICMP echo and echo reply) to test the DDOS protection.

The test started by exchanging 42.8Gbit/s regular background traffic. We then added 9Gbit/s ICMP attacks, which the VSR adequately dropped. The background traffic continued to be forwarded without any drops or session flaps. In the process, the average CPU utilization went from 3% to 58%; the busiest core never exceeded 72%.

The ICMP attack prevention worked impressively, especially as all queues are implemented in software.

Virtualized Service Router — Route Reflector (VSR-RR): Test setup

A route reflector (RR) is a BGP (border gateway protocol) router functioning as a central hub for the exchange of route prefix updates in large networks. Nokia's VSR can be deployed as a route reflector; in such cases, Nokia calls it the VSR-RR. Nokia used a different software version, TiMOS-B-13.0.R6, for the VSR-RR test, since this VNF is commercially available.

Traditionally, route reflectors have been implemented as hardware routers with large memory and fast CPUs. There is a benefit to having (redundant) RRs peering with a lot of neighbor BGP routers: it reduces the route calculation workload for non-reflecting routers and speeds up route distribution. The scalability of such RRs was usually limited by the scale of the underlying hardware router.

This, then, is a perfect use case for a virtual router that can scale to very large memory and CPU power. The main goal of our evaluation was to test if the RR software would scale linearly to support such large scenarios.

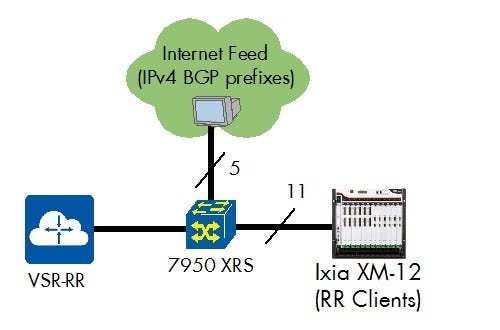

The figure below shows the basic physical test setup: The VSR-RR was connected to a real Internet BGP feed for IPv4 routes and to many emulated routers on an Ixia test system. We executed all VSR-RR tests using an Ixia XM12 test chassis and tester ports of 10GigE. The Ixia test equipment emulated the network (up to 1,000 routers as RR clients).

Figure 9: Physical Route Reflector Test Setup

Each of the RR clients received the same copy of the master routing table.

VSR-RR: Synthetic Route Reflector scalability

Key takeaway: 40 million synthetic IPv4 prefixes to 100 client peers each; fully converged within 308 seconds (13 million route updates per second)

In a first step, we employed the traditional test methodology: We created synthetic IPv4 prefixes -- that is, prefixes that are consecutive and all have a limited BGP path distribution. In fact, we created a huge number of these prefixes: 40 million reflected to each peer. This is around 80 times the size of the Internet IPv4 routing table. With 100 peers, there was a total number of (non-unique) 4 billion routes in the network!

The VSR-RR converged really fast: It took just 308 seconds (slightly longer than five minutes) to distribute all routes to all peers. We verified successful convergence by sending data to each of the prefixes. The virtual router required 14GB of memory to complete this task.

However, the Nokia expert and EANTC team on site pointed out that this performance result cannot be achieved in production networks: real-world IPv4 BGP prefixes, as seen in the Internet routing table, are far more complex to process due to their extensive BGP path distribution (AS Path, next hops). For a long time, such synthetic routes have been the standard for RR testing -- but service providers were often surprised by the much longer convergence times in their commercial operations than they had measured in the lab.

We decided to advance the test scenario in a significant way: In the next, much more realistic test setup, we provided a copy of a true IPv4 Internet BGP feed (currently containing 515,858 prefixes) to the RR as the master database. These routes are not contiguous and contain approximately 71,900 unique BGP paths. Obviously, we expected the introduction of a realistic scenario to have an impact on the results.

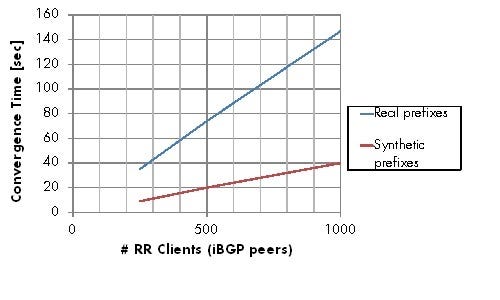

We ran a series of test runs with 100, 250, 500, and 1,000 client peers and compared the results with similar runs for the same number of synthetic prefixes.

Key takeaway: VSR-RR successfully advertised 515,858 real IPv4 Internet prefixes to up to 1,000 client peers; fully converged within 147 seconds. VSR-RR scaled linearly, showing 3.2–3.5 million route updates per second.

There are four important findings:

1. VSR-RR processed more than 3.2 million route updates per second for real world prefixes at scale.

2. The implementation scaled linearly: The route update rate with 1,000 peers was as high as with just 100 peers.

3. VSR-RR used all available CPU cores equally, load-balanced between 74–90% of their capacity, ensuring that all capacity was properly used without stalling any tasks.

4. For comparison with historic measurements, VSR-RR performed in the range 12.8–14.3 million route updates per second for synthetic prefixes at scale.
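The route update rates quoted above can be reproduced from the prefix counts, peer counts and convergence times given in the key takeaways. The sketch below assumes the rate is simply the total number of (non-unique) routes delivered divided by the convergence time; the function name is illustrative.

```python
def route_updates_per_second(prefixes: int, peers: int,
                             convergence_s: float) -> float:
    """Total routes reflected to all peers, divided by convergence time."""
    return prefixes * peers / convergence_s


# Synthetic run: 40 million prefixes to each of 100 peers in 308 seconds.
synthetic_rate = route_updates_per_second(40_000_000, 100, 308)

# Realistic run: 515,858 Internet prefixes to 1,000 peers in 147 seconds.
real_rate = route_updates_per_second(515_858, 1_000, 147)

if __name__ == "__main__":
    print(f"synthetic: {synthetic_rate / 1e6:.1f} million updates/s")
    print(f"real feed: {real_rate / 1e6:.1f} million updates/s")
```

This gives roughly 13.0 million updates per second for the synthetic prefixes and roughly 3.5 million for the real Internet feed, in line with the ranges reported in the findings.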

Figure 10: VSR-RR IPv4 Convergence Results

The route reflector required 0.63GB for realistic prefixes to 250 peers.

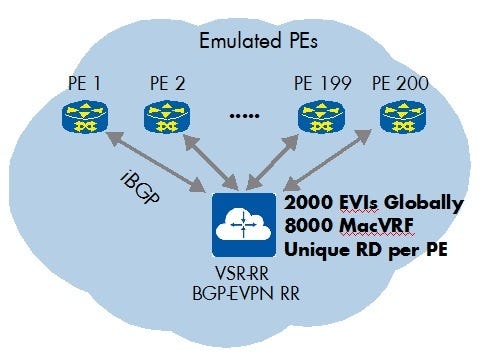

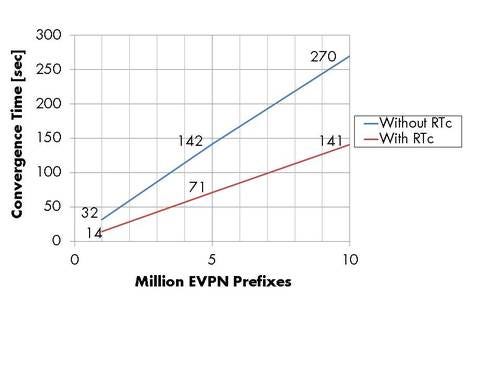

VSR-RR: EVPN Route Reflection

Ethernet VPN (EVPN) is the next generation of Ethernet services and is growing in importance in the industry, as these services offer sophisticated access redundancy combined with L3 VPN-like operations for scalability and control. EVPN routes, which are essentially Ethernet MAC addresses, are conveyed over BGP.

This configuration provides a solution for a long-standing issue with Ethernet services across service provider backbones: scalability to many multi-point Ethernet service instances with a lot of Ethernet MAC addresses each. It is much more efficient and scalable to use the BGP control plane for Ethernet address distribution than it is to use the service's data plane.

We asked Nokia to configure an EVPN route reflection scenario. Usually, EVPN and IP routes would be mixed; we decided to separate the two scenarios to measure proper baseline figures.

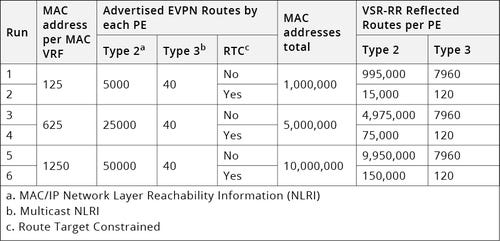

Key takeaways:

– VSR-RR successfully advertised 10 million MAC addresses (EVPN routes) to 200 client peers, requiring 8 GB of memory

– VSR-RR fully converged within 141 seconds (with route target constraints) or 270 seconds (no RTc). VSR-RR scaled linearly, showing 7.4 million EVPN route updates per second.

– VSR-RR reflected up to 1 million EVPN prefixes to all 200 peers in 32 seconds without RTc and 14 seconds with RTc; RTc reduced the convergence time by half on average.

Complementary to this test we also asked Nokia to test with Route-Target Constraints (RTc) specified in RFC 4684. RTc is a BGP mechanism that allows the route reflector to send only individually required prefixes to each PE. It helps to save PE resources, reduce convergence times and increase scale, which is obviously a significant benefit for EVPNs.
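The effect of RTc can be illustrated with a simplified model: without it, the route reflector sends every route to every PE; with it, each PE receives only routes carrying a route target that the PE has signalled interest in. The Python sketch below is a conceptual model only. The `EvpnRoute` type, the route-target strings and the MAC addresses are hypothetical, and the sketch does not model the BGP message exchange defined in RFC 4684.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class EvpnRoute:
    """Simplified EVPN MAC route: a MAC address plus its route targets."""
    mac: str
    route_targets: FrozenSet[str]


def routes_for_peer(routes: List[EvpnRoute], peer_imports: Set[str],
                    rtc_enabled: bool) -> List[EvpnRoute]:
    """Select the routes a route reflector would send to one PE."""
    if not rtc_enabled:
        return list(routes)  # reflect everything; the PE discards what it doesn't import
    # With RTc, only routes sharing at least one route target with the PE are sent.
    return [r for r in routes if r.route_targets & peer_imports]


# Hypothetical example data: two EVIs, one PE that participates only in the first.
all_routes = [
    EvpnRoute("00:00:5e:00:53:01", frozenset({"65000:1"})),
    EvpnRoute("00:00:5e:00:53:02", frozenset({"65000:2"})),
]
pe_imports = {"65000:1"}

sent_without_rtc = routes_for_peer(all_routes, pe_imports, rtc_enabled=False)
sent_with_rtc = routes_for_peer(all_routes, pe_imports, rtc_enabled=True)
```

In this toy case the PE receives two routes without RTc and one with it, which is why enabling RTc reduces both the PE's resource usage and the time needed to converge.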

We tested with 2,000 EVIs (Ethernet VPNs) distributed among 200 peers, with each EVI being defined in up to 4 PEs.

This gave us 8,000 MacVRFs globally, each of them defined with 125, 625 or 1,250 MAC addresses.
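The scale figures follow directly from these numbers. The short calculation below assumes every EVI is defined on the full four PEs (the upper bound stated above) and that each of the three MAC counts corresponds to a separate test run, which is an inference from the 1 million and 10 million route totals quoted in the key takeaways.

```python
EVIS = 2_000
PES_PER_EVI = 4          # upper bound: "up to 4 PEs" per EVI
PEERS = 200

MAC_VRFS = EVIS * PES_PER_EVI


def total_macs(macs_per_vrf: int) -> int:
    """Total MAC addresses (EVPN routes) across all MAC-VRFs."""
    return MAC_VRFS * macs_per_vrf


def evpn_updates_per_second(routes: int, peers: int, seconds: float) -> float:
    """Routes reflected to all peers, divided by convergence time."""
    return routes * peers / seconds


if __name__ == "__main__":
    for per_vrf in (125, 625, 1_250):
        print(f"{per_vrf} MACs per MAC-VRF -> {total_macs(per_vrf):,} routes")
    rate = evpn_updates_per_second(total_macs(1_250), PEERS, 270)
    print(f"10M routes, no RTc: {rate / 1e6:.1f} million updates/s")
```

With 1,250 MAC addresses per MAC-VRF the total is 10 million routes; reflecting them to 200 peers in 270 seconds works out to about 7.4 million updates per second, matching the figure reported for the run without RTc.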

Figure 11: VSR-RR EVPN Logical Setup

The route reflector required 8GB of memory to maintain 10 million MAC addresses distributed to each of the 200 peers.

As shown in the table below, we performed the tests by advertising different sets of EVPN routes including RTc enabled and disabled.

Figure 23:

Figure 12: VSR-RR EVPN Route Convergence

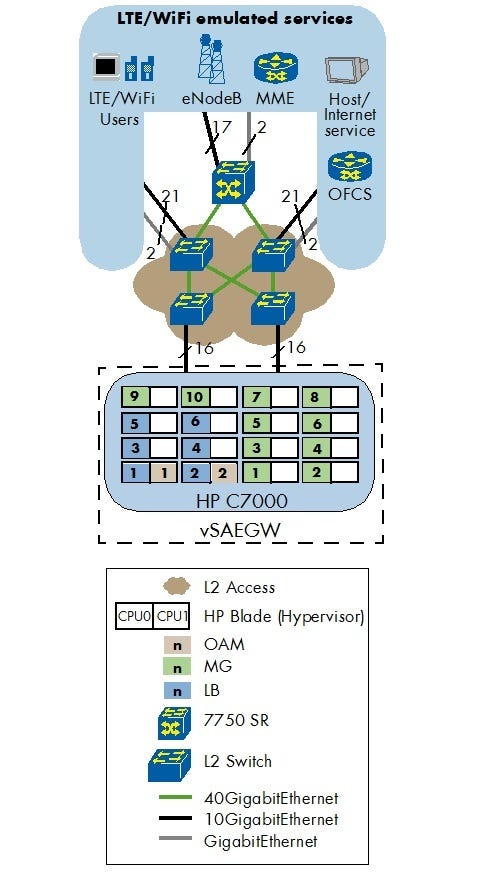

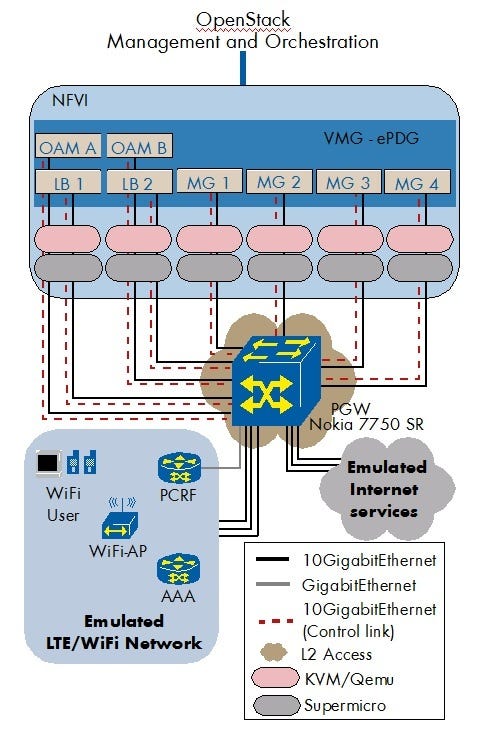

Virtualized Mobile Gateway (VMG): Test setup

Nokia informed us that the Virtualized Mobile Gateway (VMG) uses the same code base as the VSR, specifically regarding packet forwarding technology (vFP). Likewise, VMG is modularized into multiple virtual machines for the control and data plane forwarding, allowing it to scale and adapt to different use case scenarios.

The EANTC test focused on a mixed use case:

Large fraction of emulated machine-to-machine (M2M) subscribers with low data throughput per subscriber

Smaller fraction of emulated consumer subscribers with high data throughput per subscriber

This mixture is more challenging than a pure consumer scenario because of the ratio of control plane traffic and large number of subscribers.

The VMG tests followed a journey:

from lifecycle management;

through data plane performance and scalability;

and bearer/subscriber scale;

to high availability.

We ran most of the tests with two VMG configurations: first, as an SAEGW (3GPP-defined System Architecture Evolution Gateway), where VMG implemented the Serving Gateway (S-GW) and Packet Data Network Gateway (P-GW) functions; second, as an ePDG (Evolved Packet Data Gateway), where the system under test secured data transmission with subscribers connecting via untrusted WLAN (terminating IPsec tunnels).

In both cases, Nokia used TiMOS-MG-C-8.0.B2-19 software and OpenStack Liberty.

Nokia supplied an HP C7000 blade system with a total of 32 CPUs for the SAEGW test and standard Supermicro x86 servers for the ePDG test. There was no specific reason for the hardware selection; the SAEGW test could have been executed on other x86 hardware as well.

Figure 13: VMG Configuration for SAEGW

Nokia chose Spirent's Landslide as the test equipment. A large number of Landslide units were engaged by Spirent support, as the VMG scaled more efficiently than the test equipment, yet the IPv6 traffic ratio was still limited. By conducting reference tests, using internal Nokia test tools for emulation, EANTC concluded that the VMG was not the limiting factor.

In the SAEGW case, Nokia configured the SAEGW, which operates as a fully distributed network of multiple VMs. SAEGW consists of three components:

Operations, Administration and Maintenance (OAM) VM: Performs the control plane functions for the VMG, including management of individual VMs, routing protocols and support of the management interfaces including SNMP/SSH/CLI.

Load Balancer (LB) VM: Provides external network connectivity (input/output) to mobile gateway function and load distribution across Mobile Gateway VMs. It forwards GTP-C/GTP-U and user equipment (UE)-addressed packets to the Mobile Gateway VM.

Mobile Gateway (MG) VM: Performs the 3GPP Call/Session Processing and Bearer Management Functions (Control and Data Plane), Policy and Charging Enforcement Function (PCEF).

In the SAEGW test, Nokia configured 2 OAM VMs, 6 LB VMs and 10 MG VMs, as shown in Figure 13 above. The MG VMs were configured in 1+1 redundant mode, which provides 5 MG groups in total. Nokia allocated 11 cores for each VM and used SR-IOV to connect the virtual network functions with network hardware. In addition, an Ethernet switching and IP routing infrastructure was set up to reflect what an operator network design could look like.
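To make the resource footprint of this layout concrete, the following Python sketch tallies the VMs, cores and MG groups from the numbers given above. The `VmPool` structure is purely illustrative and is not taken from any Nokia or OpenStack tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmPool:
    role: str
    count: int
    cores_per_vm: int


# SAEGW layout as described in the text: 11 cores for each VM.
saegw = [
    VmPool("OAM", 2, 11),
    VmPool("LB", 6, 11),
    VmPool("MG", 10, 11),
]

total_vms = sum(pool.count for pool in saegw)
total_cores = sum(pool.count * pool.cores_per_vm for pool in saegw)

# 1+1 redundancy pairs each active MG VM with a standby MG VM.
mg_pool = next(pool for pool in saegw if pool.role == "MG")
mg_groups = mg_pool.count // 2

print(f"VMs: {total_vms}, cores: {total_cores}, MG groups: {mg_groups}")
```

Under these figures the SAEGW deployment occupies 18 VMs and 198 cores, with half of the MG cores held in reserve by the 1+1 redundancy scheme.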

Figure 14: VMG Configuration for ePDG

The configuration for ePDG consists of the same OAM, LB and MG VMs as the SAEGW. In the ePDG test, Nokia allocated 17 cores for MG VMs, 8 cores for LB VMs and 4 cores for OAM VMs.

VMG: Lifecycle management

We began by running a couple of provisioning activities. As before, we used the Nokia 5620 SAM integrated element manager and VNF manager functions. It proved to be an efficient and insightful graphical user interface for day-to-day operator functions.

Step 1: Initially, we created an SAEGW instance on multiple virtual machines. The internal structure of the VMG is not trivial, particularly in relation to the internal communication paths between the virtual machines; in addition, the correct number of components needs to be established depending on the respective use case. As part of the element manager function, 5620 SAM loaded the application layer configurations for all components as well.

Step 2: Next, we checked IP connectivity of the VMG instance manually to verify that 5620 SAM had set up everything correctly.

Step 3: Covering the second main use case of this test session, we created an ePDG instance on multiple virtual machines. The components had to be instantiated and configured in the same way as in Step 1.

Step 4: Finally, we checked the IP connectivity of the ePDG instance as a proof point of the correct 5620 SAM functions.

As expected, 5620 SAM completed all these functional evaluation steps successfully.

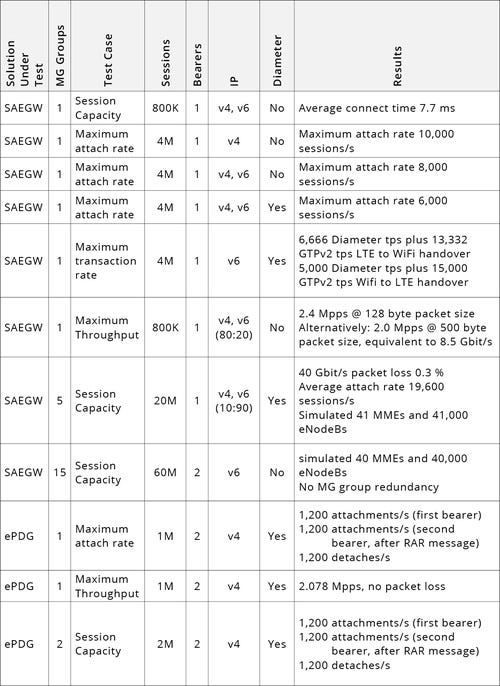

VMG: Scalability and performance tests

For the SAEGW and ePDG tests, we baselined four performance parameters:

Maximum session capacity

Maximum attach rate

Maximum transaction rate

Maximum data plane throughput

Initially, these tests were conducted with just one MG group (using one CPU socket) plus LB and OAM VMs on other CPU sockets. The main reason was to baseline the performance for a minimum of compute resources.

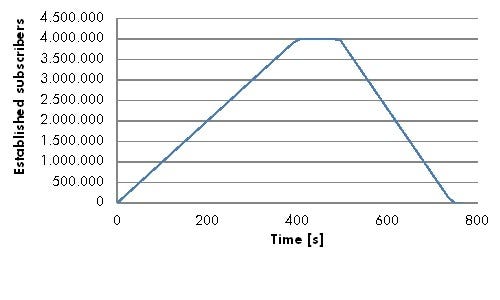

Spirent Landslide was configured to emulate a total of 12 MMEs (mobility management entities) with 1,000 eNodeBs per MME; on each eNodeB, there were 800 subscribers (user equipment instances) emulated. In total, this created a scenario with 12,000 eNodeBs and 800,000 subscribers.

In all test runs, we configured Landslide to attach subscribers, create public data network (PDN) sessions and send data in a ramp-up/ramp-down model as shown in the diagram below. Nokia configured some of the scenarios with and without Diameter to show the difference.

We configured Landslide to establish sessions at various speeds: in some test cases, we looked for the maximum attach rates, so high session setup rates were used; in other test cases (such as the session capacity one), average setup rates were configured as shown in the figure below.

Figure 15: SAEGW Session Scalability for one MG group without Diameter
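A ramp-up/ramp-down load model of this kind can be sketched in a few lines of Python. The example below is only an illustration: the function names, the 600-second hold time and the detach rate are hypothetical, and the session target and attach rate are borrowed from the highlights in this report rather than from the parameters of any specific test run.

```python
def ramp_durations(target_sessions, attach_rate, detach_rate):
    """Return (ramp-up seconds, ramp-down seconds) for a linear ramp."""
    return target_sessions / attach_rate, target_sessions / detach_rate


def active_sessions(t, target_sessions, attach_rate, hold_s, detach_rate):
    """Number of attached sessions t seconds after the test starts."""
    up_s, down_s = ramp_durations(target_sessions, attach_rate, detach_rate)
    if t <= 0:
        return 0
    if t < up_s:                        # ramp-up phase
        return int(t * attach_rate)
    if t <= up_s + hold_s:              # steady state, data is sent
        return target_sessions
    if t < up_s + hold_s + down_s:      # ramp-down phase
        return int(target_sessions - (t - up_s - hold_s) * detach_rate)
    return 0


TARGET = 4_000_000   # sessions per MG group (from the highlights)
ATTACH = 10_000      # sessions per second (from the highlights)
HOLD_S = 600         # hypothetical steady-state duration
DETACH = 10_000      # hypothetical detach rate

up_s, down_s = ramp_durations(TARGET, ATTACH, DETACH)
print(f"ramp-up {up_s:.0f} s, hold {HOLD_S} s, ramp-down {down_s:.0f} s")
for t in (0, 200, 400, 1000, 1200, 1400):
    print(t, active_sessions(t, TARGET, ATTACH, HOLD_S, DETACH))
```

The sketch shows why the setup rate matters for test duration: at a lower attach rate, the same session target takes proportionally longer to reach before any steady-state measurement can begin.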

The table at the foot of this page summarizes all the test cases with their varying parameters, but here are some highlights with some background commentary:

Session capacity

Key takeaway: SAEGW showed a capacity of 4,000,000 sessions per MG group. The ePDG was tested with 1,000,000 sessions per MG group.

The maximum session capacity is most important for Internet of Things (IoT) scenarios, which will usually include a very large number of devices connected to the mobile network. In our test, we assumed that the number of IoT devices in a future network would represent 90% of all subscribers connected.

Maximum attachment rate

Key takeaway: One MG group of the SAEGW was able to set up 10,000 sessions per second with a single bearer. One MG group of the ePDG managed to establish 1,200 sessions per second with two bearers each.

Mobile networks are not static. Subscribers are constantly on the move, drifting in and out of mobile network coverage and switching between 3GPP radio access network types (GSM, 3G and LTE). For each of these activities, sessions need to be re-attached. In addition, large-scale attachments might happen after a partial network failure, whether related to a single base station, a set of base stations, an MME or an even larger part of the network.

The SAEGW needs to be prepared to handle a large number of attachments in a short time: The attachment rate is a key performance aspect. We measured the maximum attachment rate with preset values of 6,000, 8,000 and 10,000 sessions per second.

The ePDG needs to be prepared for a high rate of attachments, too, as subscribers move between WiFi and 3GPP access points. For the ePDG, we tested dual-bearer scenarios in support of Voice over WiFi (VoWiFi). Each subscriber sets up two sessions -- one for voice, one for data.

Figure 16: Physical Test Setup for VMG Tests

Data throughput

Key takeaways:

– Per MG group, the SAEGW scaled to 2.4 million packets per second; with 500-byte sized packets, it reached 8.5 Gbit/s throughput

– Per MG group, the ePDG scaled to 2.0 million packets per second

Data plane forwarding performance evaluation is one of the classic test cases for mobile packet gateways. In a mixed IoT scenario, the throughput per subscriber will be much lower than in a pure consumer scenario. However, since VMG scales to a large number of sessions, the throughput needs to scale accordingly.

This was an opportunity for vFP, Nokia's virtual forwarding processor, to be put to work again. (See introduction section for more details on vFP.)

We tested both for maximum number of packets per second and for throughput in gigabits per second, using a realistic average packet size. The number of packets metric is more important, as the total data throughput per MG group was limited by the physical 10GigE interfaces.
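The relationship between packet rate, packet size and throughput, and the ceiling imposed by a 10GigE port, can be sketched as follows. This is a back-of-the-envelope illustration only: it assumes the 500-byte size refers to the whole Ethernet frame and that each frame carries the usual 20 bytes of preamble and inter-frame gap on the wire. The report does not state how packet size was counted, and the packet-rate and throughput highlights need not come from the same test run.

```python
WIRE_OVERHEAD_BYTES = 20  # assumed: 8 bytes preamble/SFD + 12 bytes inter-frame gap


def pps_for_throughput(gbps, packet_bytes):
    """Packet rate needed to carry `gbps` with packets of `packet_bytes`."""
    return gbps * 1e9 / (packet_bytes * 8)


def line_rate_pps(link_gbps, frame_bytes, overhead_bytes=WIRE_OVERHEAD_BYTES):
    """Maximum frames per second a link can carry, including wire overhead."""
    return link_gbps * 1e9 / ((frame_bytes + overhead_bytes) * 8)


# Packet rate implied by 8.5 Gbit/s of 500-byte packets.
implied_pps = pps_for_throughput(8.5, 500)

# Upper bound for 500-byte frames on a single 10GigE port.
ceiling_pps = line_rate_pps(10, 500)

print(f"implied rate: {implied_pps:,.0f} packets/s")
print(f"10GigE ceiling: {ceiling_pps:,.0f} frames/s")
```

Under these assumptions a single 10GigE port tops out at roughly 2.4 million 500-byte frames per second, which illustrates why the packets-per-second metric says more about the forwarding engine than the gigabit figure does once the interfaces are saturated.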

VMG: High availability

Key takeaways:

– SAEGW implements resiliency of LB and MG VMs against component failures, data and fabric link failures

– During failover, the SAEGW maintained all sessions; data path failover time was less than 1 second

As a final test, we emulated selective outages of different types of VMG components, forcing the system to fail over to a backup component. We performed three types of LB and MG failover tests each:

Tearing down LB and MG VMs by issuing an OpenStack 'nova stop' command

Failing a data link attached to the LB VM

Failing a fabric link attached to the LB and MG VMs

Clearing the active MG VM from CLI

All sessions were maintained by the SAEGW during the whole test. The measured maximum failover time was around 1 second.
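One common way to derive a data path failover time is from the number of packets lost while a constant-rate stream is running. The report does not say how the failover time was measured, so the Python sketch below is an assumption about method, and the traffic figures in it are hypothetical.

```python
def failover_time_s(lost_packets, offered_pps):
    """Estimate outage duration from packet loss on a constant-rate stream."""
    if offered_pps <= 0:
        raise ValueError("offered_pps must be positive")
    return lost_packets / offered_pps


# Hypothetical example: 100,000 packets/s offered, 80,000 packets lost.
outage = failover_time_s(lost_packets=80_000, offered_pps=100_000)
print(f"estimated failover time: {outage:.2f} s")
```

The estimate is only as good as the assumption that the offered rate is constant and that all loss is caused by the failover event.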

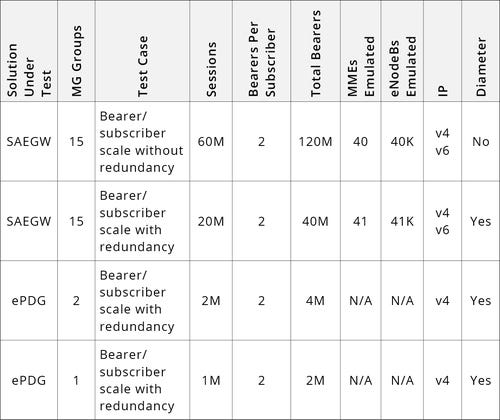

VMG: Bearer/subscriber scale

Key takeaways:

– SAEGW scaled to 60 million subscribers, 120 million bearers in a non-redundant scenario

– Soak test confirmed stable operation for 9.5 hours with 2.4 Gbit/s throughput (7.4 MPPS) and 0.008% data loss

– ePDG established 1 million sessions with 2 million bearers and sent traffic at 2.078 MPPS (4 Gbit/s) for 200,000 subscribers without loss

For this test scenario, we evaluated how many subscribers a single MG VM, or a larger group of MG VMs, can maintain.

Most of the tests were conducted for a short time period only, but we did run one test overnight in an effort to confirm the long-term stability of the implementation -- VMG passed that test easily.
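To put the soak test figures from the key takeaways into perspective, the following Python sketch estimates how many packets were sent and lost over the run. It assumes the 7.4 MPPS rate was held constant for the full 9.5 hours and that the 0.008% loss figure is a ratio of packets, neither of which is stated explicitly in the report.

```python
PPS = 7_400_000               # 7.4 MPPS
DURATION_S = 9 * 3600 + 1800  # 9.5 hours in seconds
LOSS_PER_100K = 8             # 0.008% expressed as packets lost per 100,000

packets_sent = PPS * DURATION_S
packets_lost = packets_sent * LOSS_PER_100K // 100_000

print(f"packets sent: {packets_sent:,}")
print(f"packets lost: {packets_lost:,}")
```

Under these assumptions, roughly 253 billion packets were sent, of which about 20 million were lost.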

Typically both IPv4 and IPv6 traffic was used, reflecting the current growing volume of IPv6 traffic in mobile networks.

