There is a widespread view, verging on the religious, that radio access networks (RANs) will one day be "cloud native." I'm no heretic, but what do we actually mean by cloud native RAN? In practice, the industry lacks a solid definition, and there's a lot of hand waving and interpretation going on.

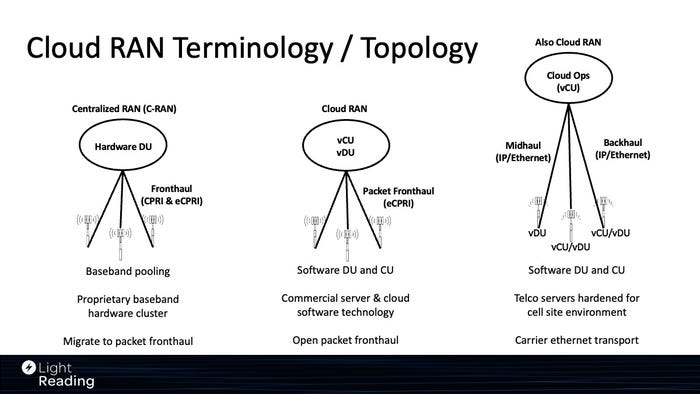

In my opening remarks at the Cloud Native Open RAN session at this year's Light Reading Open RAN Symposium, I proposed a terminology to frame the discussion. As shown in the figure, this framework yields a taxonomy based on where the baseband processing functions are deployed.

Deployment topology determines many key RAN decisions, for example on physical site requirements (footprint, power, cooling, aesthetics, etc.), transport (fronthaul, midhaul, backhaul, etc.), server hardware (ruggedized, racked, etc.), merchant silicon choices (accelerators, CPUs, etc.) and cloud software (containers/middleware, etc.). Perhaps most importantly, it also affects RAN operations. And because the baseband drives much of a RAN's performance and functionality, it is at the center of the deployment topology decision.

(Source: Heavy Reading)
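As a rough illustration, the taxonomy can be read as a function of two inputs: where the baseband (specifically the DU) runs, and whether it is virtualized. The sketch below is a simplification under that assumption; the `Site` enum, the `classify_ran` function and the "distributed RAN" label for today's appliance-based cell-site topology are hypothetical names chosen for illustration, not part of the original framework.

```python
from enum import Enum


class Site(Enum):
    CELL_SITE = "cell site"  # baseband at the antenna site
    EDGE_HUB = "edge hub"    # baseband aggregated a few kilometers from the antenna site


def classify_ran(du_site: Site, virtualized: bool) -> str:
    """Map DU placement and virtualization to the topology labels used in this article.

    Hypothetical helper: it keys only on where the DU runs, so a centralized CU
    with a distributed DU still counts as distributed cloud RAN.
    """
    if du_site is Site.EDGE_HUB:
        # Centralized baseband: proprietary units (C-RAN) or vCU/vDU on open servers
        return "centralized cloud RAN" if virtualized else "C-RAN"
    # Baseband at the cell site: the dominant appliance-based topology,
    # or vDU (and/or vCU) deployed as CNFs on a COTS server at the site
    return "distributed cloud RAN" if virtualized else "distributed RAN"


print(classify_ran(Site.EDGE_HUB, virtualized=False))  # C-RAN
print(classify_ran(Site.CELL_SITE, virtualized=True))  # distributed cloud RAN
```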

Centralized RAN – its time will come

On the left of the figure is "centralized RAN" (C-RAN), a deployment architecture that, to my recollection, has been around for close to two decades, and probably longer. Classically, a centralized baseband is connected over dark fiber CPRI links to radio units at the antenna site. This architecture is "cloud-like" in the sense that the baseband is a pooled resource that can be multiplexed between radio sectors. Centralization can also enable some radio and mobility performance gains thanks to tight coordination between sectors.
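Why pooling helps can be shown with simple arithmetic: dedicated basebands must each be sized for their own sector's peak, whereas a pooled baseband only needs to cover the peak of the combined load, which is smaller whenever sectors peak at different times. The sketch below assumes hypothetical load traces in arbitrary processing units; the sector names and numbers are illustrative only.

```python
def baseband_capacity(sector_loads: dict[str, list[int]]) -> tuple[int, int]:
    """Return (dedicated, pooled) baseband capacity for per-sector load traces.

    Each trace holds one sector's load at the same sequence of time steps.
    """
    # Dedicated basebands: every sector is provisioned for its own peak
    dedicated = sum(max(trace) for trace in sector_loads.values())
    # Pooled baseband: provisioned once for the peak of the combined load
    pooled = max(sum(step) for step in zip(*sector_loads.values()))
    return dedicated, pooled


# Hypothetical loads at three times of day: morning, evening, and during an event
loads = {
    "office": [8, 2, 1],
    "residential": [2, 7, 3],
    "stadium": [1, 1, 9],
}
print(baseband_capacity(loads))  # (24, 13)
```

In this made-up example the pool needs 13 units instead of 24, because the office, residential and stadium sectors never peak together. Real gains depend entirely on how correlated the sectors' traffic is.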

The downside of classic C-RAN is that it requires point-to-point fiber pairs and very well-engineered optical transmission. The system is based on proprietary baseband hardware/software units; in this sense, it is far from being "cloud native." C-RAN is used fairly often in dense urban scenarios in South Korea and Japan. It is not widely used elsewhere, but most large operators have at least had a trial or commercial pilot of this architecture.

With the addition of packet fronthaul (eCPRI), C-RAN becomes more deployable. Packet fronthaul compresses the data stream and enables transport over Ethernet. Hence, the operator can multiplex multiple radios onto one optical Ethernet transport link between the radios and the hub site, rather than using multiple fiber pairs per radio head as before. This is a big win.
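The transport saving can be sketched as a packing problem: with CPRI, fiber scales with the number of radio heads, while with packet fronthaul the radio streams share Ethernet links. The sketch below uses the first-fit-decreasing bin-packing heuristic to assign streams to links. The per-radio bitrates, the 25 Gb/s link speed, the 80% utilization cap and the two fiber pairs per radio are all hypothetical parameters, not figures from the article.

```python
def fiber_pairs_cpri(num_radios: int, pairs_per_radio: int) -> int:
    # Classic C-RAN: dedicated point-to-point fiber for every radio head
    return num_radios * pairs_per_radio


def ethernet_links_ecpri(radio_gbps: list[float], link_gbps: float,
                         max_util: float = 0.8) -> int:
    """First-fit-decreasing assignment of radio streams to shared Ethernet links."""
    usable = link_gbps * max_util  # keep headroom on every link
    free: list[float] = []         # remaining capacity of each link in use
    for load in sorted(radio_gbps, reverse=True):
        if load > usable:
            raise ValueError(f"{load} Gb/s stream exceeds one link's usable capacity")
        for i, remaining in enumerate(free):
            if load <= remaining:
                free[i] = remaining - load
                break
        else:
            free.append(usable - load)  # no existing link fits: open a new one
    return len(free)


radios = [2.0] * 12  # hypothetical: 12 radios at 2 Gb/s each
print(fiber_pairs_cpri(len(radios), pairs_per_radio=2))  # 24
print(ethernet_links_ecpri(radios, link_gbps=25.0))      # 2
```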

Packet fronthaul needs high-performance Ethernet transport; it will not run on a regular switched Ethernet service designed for IP backhaul. This is technically solvable but currently economically challenging for most operators.
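One way to see why a general-purpose switched service falls short is a simple one-way delay budget: fiber propagation (roughly 5 microseconds per kilometer), plus per-hop switching delay, plus the packetization and compression step. The sketch covers delay only; jitter and timing synchronization matter too and are not modeled. The 100-microsecond budget and the per-hop delays are hypothetical values chosen for illustration, since real budgets depend on the functional split and deployment profile.

```python
FIBER_US_PER_KM = 5.0  # light in optical fiber covers roughly 1 km every 5 microseconds
BUDGET_US = 100.0      # hypothetical one-way fronthaul budget, for illustration only


def fronthaul_one_way_us(fiber_km: float, switch_hops: int, per_hop_us: float,
                         packetization_us: float = 0.0) -> float:
    """Approximate one-way fronthaul delay: propagation + switching + packetization."""
    return fiber_km * FIBER_US_PER_KM + switch_hops * per_hop_us + packetization_us


# Hypothetical: a dedicated low-latency fronthaul network vs. a shared backhaul-grade service
dedicated = fronthaul_one_way_us(10, switch_hops=2, per_hop_us=5.0, packetization_us=10.0)
shared = fronthaul_one_way_us(10, switch_hops=6, per_hop_us=40.0, packetization_us=10.0)
print(dedicated, dedicated <= BUDGET_US)  # 70.0 True
print(shared, shared <= BUDGET_US)        # 300.0 False
```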

Centralized vRAN – a.k.a. cloud RAN

In the center of the figure is an evolution of the C-RAN physical topology. The baseband is still centralized (that is, aggregated at an edge site a few kilometers from the antenna site) but is now virtualized/containerized: the virtualized central unit and distributed unit (vCU and vDU) functions (and/or the microservices that constitute them) are deployed on open server hardware.

Baseband virtualization/containerization comes with packet fronthaul and, ideally, open packet fronthaul. As a side note, packetizing and compressing digital RF introduces a small amount of latency, which is one reason additional open fronthaul specifications are being developed for massive MIMO in 5G.

This architecture can deliver excellent performance, rapid software updates and low opex. It is, more or less, what Rakuten Mobile has deployed in Japan and can legitimately be called cloud RAN.

We should expect this architecture to evolve in the medium term. Currently, RAN workloads are deployed on dedicated servers/racks optimized for RAN. At the time of writing, we are not aware of vRAN being deployed as a workload on multi-tenant cloud infrastructure. In this sense, this architecture fails the “cloud native” test. Nvidia talks about "cloud RAN" versus "RAN in the cloud" to make this distinction.

How cloud infrastructure evolves to handle RAN has many implications. Silicon suppliers, server vendors, cloud software suppliers and hyperscalers all have development work to do to meet the demands of RAN. The good news is that cloud infrastructure, in general, is evolving to support specialist workloads, as the similarities between RAN and other workloads that benefit from hardware acceleration (e.g., encryption, packet processing, media transcoding and AI) illustrate. There is now a line of sight to cloud infrastructure better suited to RAN, and to RANs better suited to cloud.

Distributed cloud RAN

Another approach is to use cloud technologies to deploy a distributed RAN. In this version of cloud RAN, the vDU (and/or vCU) is deployed at the cell site to mirror the currently dominant distributed topology.

The CU and DU software is deployed as cloud native network functions (CNFs) running in containers on a telco commercial off-the-shelf (COTS) server. This server is typically deployed in a cabinet at the site and therefore needs to support the same features as a classic baseband appliance (e.g., sync, lifespan, reliability and environmental robustness). Each server also needs its own cloud software instance (essentially a Kubernetes cluster). This creates quite a different set of requirements from hosting baseband in an edge data center in a centralized cloud RAN topology, and these requirements are why server vendors and hyperscalers are now developing "RAN servers" for cell sites and other remote, far-edge locations.
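The operational consequence of "one cluster per server per site" is easy to quantify. The sketch below counts the cloud software instances an operator would have to run under each topology, assuming (hypothetically) one cluster per edge hub in the centralized case; the site count and sites-per-hub ratio are illustrative numbers, not data from the article.

```python
import math


def clusters_to_operate(num_sites: int, topology: str, sites_per_hub: int = 1) -> int:
    """Count cloud software instances (e.g., Kubernetes clusters) to operate.

    Hypothetical model: one cluster per cell-site server in the distributed case,
    one cluster per edge hub in the centralized case.
    """
    if topology == "distributed":
        # Every cell site has its own server, and therefore its own cluster
        return num_sites
    if topology == "centralized":
        # Baseband for many sites is aggregated at each edge hub
        return math.ceil(num_sites / sites_per_hub)
    raise ValueError(f"unknown topology: {topology!r}")


print(clusters_to_operate(1000, "distributed"))                    # 1000
print(clusters_to_operate(1000, "centralized", sites_per_hub=30))  # 34
```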

In theory, a major benefit of this approach is that the RAN hardware and software are managed by a cloud management system, which enables a "cloud ops" model for RAN with the advantages of fast updates, greater automation and so on. Operators can source management tools from cloud infrastructure vendors (Wind River, VMware, Red Hat, etc.), from diverse independent cloud software vendors and from open source.
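A common "cloud ops" pattern for pushing software updates across a large fleet is a staged rollout: update a small canary group first, then proceed in fixed-size waves. The sketch below only plans the waves; it is a generic illustration, not the behavior of any particular vendor's management tool, and the site names and wave sizes are hypothetical. In a real pipeline, each wave would proceed only after health checks on the previous one pass.

```python
def rollout_waves(sites: list[str], canary: int = 1, batch: int = 10) -> list[list[str]]:
    """Split a fleet of cell sites into a canary wave followed by fixed-size batches."""
    if canary < 1 or batch < 1:
        raise ValueError("canary and batch sizes must be positive")
    if not sites:
        return []
    waves = [sites[:canary]]  # small first wave to catch regressions early
    for start in range(canary, len(sites), batch):
        waves.append(sites[start:start + batch])
    return waves


fleet = [f"site-{n}" for n in range(1, 8)]  # hypothetical site names
for wave in rollout_waves(fleet, canary=1, batch=3):
    print(wave)
# ['site-1']
# ['site-2', 'site-3', 'site-4']
# ['site-5', 'site-6', 'site-7']
```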

Although hyperscalers have not historically played at the telco far edge, they have an advantage in mature tooling proven to drive low-cost operations. And because they offer powerful "cloud platform services" (for example, analytics services that can be applied to the RAN domain), hyperscalers have another card to play, albeit one that poses a risk to the operator as a new form of vendor lock-in.

This distributed model does not support resource multiplexing because a server is dedicated to each site. However, in the future, operators may be able to run, say, a disaggregated cell site gateway on the same server as the RAN and use the same cloud operating tools to manage it. And one other caveat: in private networks deployed at the customer site, there is greater scope to share server resources between other workloads and vRAN.
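Whether a cell-site server has room for an extra workload such as a disaggregated cell site gateway is, at its simplest, a capacity check. The sketch below compares CPU cores only; real placement decisions also involve accelerators, memory, NUMA layout and real-time isolation, which are not modeled. The core counts and the cores reserved for the host OS and cloud software are hypothetical.

```python
def fits_on_server(server_cores: int, workloads: dict[str, int],
                   reserved_cores: int = 2) -> bool:
    """Check whether co-located workloads fit on one cell-site server (cores only).

    reserved_cores is a hypothetical allowance for the host OS and cloud software.
    """
    demand = sum(workloads.values()) + reserved_cores
    return demand <= server_cores


# Hypothetical core counts for a 32-core cell-site server
print(fits_on_server(32, {"vDU": 20, "vCU": 4, "cell-site gateway": 4}))  # True (30 <= 32)
print(fits_on_server(32, {"vDU": 24, "vCU": 4, "cell-site gateway": 4}))  # False (34 > 32)
```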

There are two variants of this cloud native distributed RAN topology: a centralized CU and distributed DU (essentially the DISH model) and one with both the CU and DU distributed to the cell site (Vodafone is focused on this model). The great thing in each case is that carrier Ethernet is sufficient for both midhaul and backhaul transport.
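The transport implications of each topology follow from which interfaces leave a site: RU to DU is fronthaul, DU to CU is midhaul, and CU to core is backhaul. The sketch below derives those links from a placement map and flags whether carrier Ethernet suffices (that is, no fronthaul crosses sites). The location names, and in particular placing the core in a "regional" data center, are hypothetical assumptions for illustration.

```python
# (from function, to function, interface name)
LINKS = [
    ("RU", "DU", "fronthaul"),
    ("DU", "CU", "midhaul"),
    ("CU", "core", "backhaul"),
]


def transport_links(placement: dict[str, str]) -> list[str]:
    """List the interfaces that must cross sites, given where each function runs."""
    return [name for a, b, name in LINKS if placement[a] != placement[b]]


def carrier_ethernet_ok(placement: dict[str, str]) -> bool:
    # Midhaul and backhaul can ride carrier Ethernet; fronthaul needs more demanding transport
    return "fronthaul" not in transport_links(placement)


dish_like = {"RU": "cell", "DU": "cell", "CU": "edge", "core": "regional"}
vodafone_like = {"RU": "cell", "DU": "cell", "CU": "cell", "core": "regional"}
centralized = {"RU": "cell", "DU": "edge", "CU": "edge", "core": "regional"}

print(transport_links(dish_like), carrier_ethernet_ok(dish_like))
# ['midhaul', 'backhaul'] True
print(transport_links(vodafone_like), carrier_ethernet_ok(vodafone_like))
# ['backhaul'] True
print(transport_links(centralized), carrier_ethernet_ok(centralized))
# ['fronthaul', 'backhaul'] False
```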

Which of these models will be most widely deployed in the medium term is hard to call. If forced to make a prediction, I would go with the distributed cloud RAN topology. But as fronthaul transport networks advance, I have a feeling that the use of centralized cloud RAN will pick up in dense urban networks over the next five years.

A recording of the November 2023 Light Reading Open RAN Symposium is available to view (registration required).
