5G has the potential to be a revolutionary wireless technology, but the high-band spectrum that is one of the cornerstones of the next-generation standard will require operators to take a much different approach than they did when building 4G networks and earlier cellular upgrades.

Let's review. The first-generation mobile network in the 1980s brought analog voice to the masses. The advent of integrated circuits and digital signal processing enabled 2G in the 1990s, which made digital voice available and increased network capacity dramatically. At the turn of the century, 3G combined mobile data with voice, so customers could make a phone call while replying to email. In the 2010s, 4G is all about the wireless Internet at higher speeds, and desktop applications have finally arrived on smartphones.

Nonetheless, the communications industry and its customers are still segmented. We have wireline/Internet providers, cable TV and Internet service providers, wireless operators, and over-the-top application providers. Consumers and businesses get connections from various operators and on different platforms that often don't even talk to each other. There is significant overhead in the networks, and operators must allocate substantial resources just to manage it, including signaling, billing, and device management systems.

For the end user, 5G is a connected application ecosystem. Each application will adaptively manage data speed, latency and reliability based on the tasks required. For example, an autopiloted car requires a very reliable, instant response and a secure link at highway speeds. For that car, a 5G network will provide wide coverage, low latency, and an encrypted communication link instead of blindly assigning a 100MHz channel, because higher throughput does not equal short latency and reliable coverage.

To service providers, 5G will consolidate communication systems under one roof to meet end-user application needs, such as data, voice, video, Internet of Things and critical communications. 5G will provide much higher throughput, ultra-low latency, dramatically increased network capacity, and reliable, secure services.

So, what are the 5G benchmarks? In general, 5G network architecture should provide:

Massive capacity: 1,000 times more than 4G

Super-fast data rate: 100 times more than 4G

Ultra-low latency: Less than 1 millisecond

To achieve these goals, network and user equipment manufacturers must invent new technologies to make the network drastically more efficient and deploy new spectrum to support much wider bandwidth requirements.

Millimeter wave band for 5G deployment

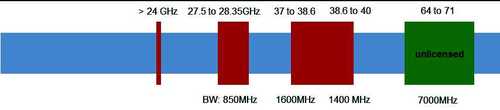

5G requires much wider bandwidth, as much as 800MHz to 2GHz. The frequency bands that have the most striking potential are the millimeter wave (mmWave) bands. When satellite communication started to deploy Ka-band (26.5GHz to 40GHz), it increased channel bandwidth from a typical 54MHz to between 500MHz and 2GHz, accompanied by spot beam frequency reuse. As a result, Ka-band links can achieve gigabit IP connections. 5G will need to do the same thing.

In October 2015, the FCC allocated three mmWave bands for 5G services; these bands are called frontier spectrum for 5G services. There is more spectrum under investigation above 24GHz.

The 28GHz band supports 850MHz of bandwidth, the 37-40GHz band supports 3GHz of bandwidth, and the unlicensed 64-71GHz band supports a whopping 7GHz of bandwidth. These allocations of spectrum and bandwidth make 5G service possible.

Millimeter wave link propagation and link budget

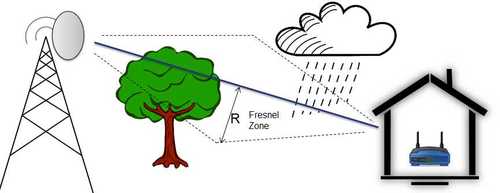

Commercial wireless service frequencies, including WiFi, are below 6GHz. The channel characteristics of these bands are well understood, and many design tools are available. But deploying mmWave frequency bands to provide a link between the UE (device) and the basestation (BS) presents many technical challenges. One of the first tasks is to understand mmWave path loss properties and build a predictable mathematical model.

Path loss and link budget are the two essential elements in investigating 5G link behavior.

A 5G link includes Line-of-Sight (LOS) and Non-Line-of-Sight (NLOS) components in the radio propagation environment. LOS path loss is close to free space path loss (though not exact above 60GHz), whereas NLOS path loss deviates significantly from free space. The typical process is to measure propagation loss at a specific frequency and over specific terrain, and then fit a curve to find the path loss exponent n. The combined path loss is proportional to dⁿ, where d is the distance between the transmit and receive antennas and n is the path loss exponent, which can range from 2 to 4.
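The curve-fitting step can be sketched in a few lines of Python. This is a minimal illustration rather than a production channel model. It assumes the common close-in reference form PL(d) = FSPL(d0) + 10·n·log10(d/d0) with d0 = 1 m, which the article does not specify, and the function names and the synthetic data are hypothetical.

```python
import math


def fspl_db(distance_m, freq_ghz):
    """Free space path loss in dB (distance in meters, frequency in GHz)."""
    return 20 * math.log10(distance_m) + 20 * math.log10(freq_ghz) + 32.45


def fit_path_loss_exponent(distances_m, losses_db, freq_ghz, d0_m=1.0):
    """Least-squares fit of n in PL(d) = FSPL(d0) + 10*n*log10(d/d0).

    The intercept is pinned to the free space loss at the reference
    distance d0 (an assumption of this sketch), so only the slope n is fitted.
    """
    reference_db = fspl_db(d0_m, freq_ghz)
    xs = [10 * math.log10(d / d0_m) for d in distances_m]
    ys = [loss - reference_db for loss in losses_db]
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


if __name__ == "__main__":
    # Synthetic "measurements" generated from a known exponent, used here
    # only to show that the fit recovers it. Real field data would be noisy.
    true_n = 2.7
    distances = [10.0, 50.0, 100.0, 200.0]
    losses = [fspl_db(1.0, 28.0) + 10 * true_n * math.log10(d) for d in distances]
    print(f"fitted n = {fit_path_loss_exponent(distances, losses, 28.0):.2f}")
```

With real measurements, the scatter of the points around the fitted line gives a sense of how much shadowing margin the link budget needs.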

Point-to-point microwave communications require clearance between the propagation path and the nearest obstacles on the ground, which is governed by Fresnel zone theory. If at least 60% of the first Fresnel zone is clear, the path is treated as LOS propagation. 5G networks, however, will have much lower antenna heights, which could introduce significant propagation blockage.

The 5G mmWave link budget is quite different from the traditional sub-6GHz wireless link budget because it must account for extra losses due to rain fade, shadowing, foliage, atmospheric absorption, humidity, and Fresnel blockage.

Below is an example 5G link budget calculation, which could vary depending on the band and type of cell.

Received power (dBm) = Tx power + Tx antenna gain + Rx antenna gain - path loss - rain fade (est. 2dB/200m) - shadowing loss (20 to 30dB) - foliage loss (10 to 50dB) - atmospheric absorption - terrain/humidity loss - Fresnel blockage - system margin
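To make the bookkeeping concrete, here is a small Python sketch of the received power equation. The class, its field names, and every numeric input are hypothetical placeholders chosen for illustration, apart from the 2dB per 200m rain fade estimate and the shadowing and foliage ranges quoted in the equation.

```python
from dataclasses import dataclass


def rain_fade_db(distance_m, db_per_200m=2.0):
    """Rain fade scaled linearly from the article's 2 dB per 200 m estimate."""
    return db_per_200m * distance_m / 200.0


@dataclass
class LinkBudget:
    tx_power_dbm: float
    tx_antenna_gain_dbi: float
    rx_antenna_gain_dbi: float
    path_loss_db: float
    rain_fade_db: float = 0.0
    shadowing_loss_db: float = 0.0
    foliage_loss_db: float = 0.0
    atmospheric_absorption_db: float = 0.0
    terrain_humidity_loss_db: float = 0.0
    fresnel_blockage_db: float = 0.0
    system_margin_db: float = 0.0

    def received_power_dbm(self):
        """Gains minus every loss term, all in dB, as in the equation above."""
        gains = (self.tx_power_dbm + self.tx_antenna_gain_dbi
                 + self.rx_antenna_gain_dbi)
        losses = (self.path_loss_db + self.rain_fade_db
                  + self.shadowing_loss_db + self.foliage_loss_db
                  + self.atmospheric_absorption_db
                  + self.terrain_humidity_loss_db
                  + self.fresnel_blockage_db + self.system_margin_db)
        return gains - losses


if __name__ == "__main__":
    # Hypothetical 200 m small-cell link; none of these values are measured.
    budget = LinkBudget(
        tx_power_dbm=30.0,
        tx_antenna_gain_dbi=25.0,
        rx_antenna_gain_dbi=15.0,
        path_loss_db=120.0,
        rain_fade_db=rain_fade_db(200.0),
        shadowing_loss_db=20.0,   # low end of the 20 to 30 dB range
        foliage_loss_db=10.0,     # low end of the 10 to 50 dB range
        system_margin_db=5.0,
    )
    print(f"received power: {budget.received_power_dbm():.1f} dBm")
```

Even with the low-end shadowing and foliage figures, the loss terms in this made-up case add up to 157dB against 70dB of combined transmit power and antenna gain, which is why each term deserves a measured rather than assumed value.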

Fresnel zone radius R (in meters, at the midpoint of the path) = 17.32 x √(d/(4f)), with d in km and f in GHz
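The Fresnel zone formula and the 60% clearance rule are easy to check numerically. The Python sketch below is illustrative only; the function names and the 200m, 28GHz example are assumptions, not values from a real deployment.

```python
import math


def fresnel_radius_m(distance_km, freq_ghz):
    """First Fresnel zone radius in meters at the midpoint of the path."""
    return 17.32 * math.sqrt(distance_km / (4 * freq_ghz))


def has_los_clearance(clearance_m, distance_km, freq_ghz, fraction=0.6):
    """True if the obstacle clearance is at least 60% of the zone radius."""
    return clearance_m >= fraction * fresnel_radius_m(distance_km, freq_ghz)


if __name__ == "__main__":
    # Hypothetical 200 m link at 28 GHz.
    radius = fresnel_radius_m(0.2, 28.0)
    print(f"Fresnel radius: {radius:.2f} m, 60% clearance: {0.6 * radius:.2f} m")
    print("0.5 m of clearance counts as LOS:", has_los_clearance(0.5, 0.2, 28.0))
```

At mmWave frequencies and short cell ranges the zone is well under a meter in radius, so the concern is less the size of the zone than the low antenna heights that put street-level obstacles inside it.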

By examining the above equations, it is obvious that many factors can cripple mmWave links. Link budget is the most important area of focus for any 5G deployment team.

Propagation loss measurements

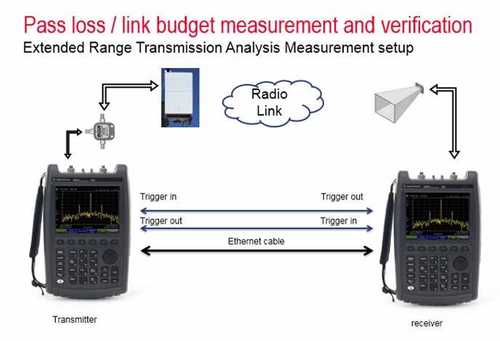

The setup to measure mmWave propagation loss includes a signal generator (for example, 26 to 40GHz), a spectrum analyzer, and two phased array or horn antennas. The signal generator simulates the basestation and should be installed at a selected cell site, where it sweeps the band from 27.5 to 28.35GHz. The spectrum analyzer measures the received signal level at a defined distance. Because the signal generator and spectrum analyzer are not synchronized, the spectrum analyzer must be able to capture enough samples at each frequency point before the signal generator tunes to the next frequency.

There are a few ways to synchronize the signal generator and spectrum analyzer, such as a timer-based trigger, a hardware trigger, or simply free run with peak hold on the spectrum analyzer. Free run is not a preferred solution because it introduces too many errors, which will affect the accuracy of the propagation model.
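The timing constraint can be sketched as a simple sweep plan in Python. Everything here except the 27.5 to 28.35GHz band edges is a hypothetical assumption: the step size, the samples per point, the capture time per sample, and the settling time would all come from the actual instruments.

```python
def sweep_points_ghz(start_ghz, stop_ghz, step_mhz):
    """Frequency points the generator steps through, band edges included."""
    n_steps = round((stop_ghz - start_ghz) * 1000 / step_mhz)
    return [round(start_ghz + i * step_mhz / 1000, 6) for i in range(n_steps + 1)]


def min_dwell_ms(samples_per_point, capture_time_ms, settle_ms=0.0):
    """Shortest time the generator must hold each frequency so the
    analyzer can settle and then capture the required samples."""
    return settle_ms + samples_per_point * capture_time_ms


if __name__ == "__main__":
    points = sweep_points_ghz(27.5, 28.35, step_mhz=50.0)
    dwell = min_dwell_ms(samples_per_point=10, capture_time_ms=2.0, settle_ms=5.0)
    print(f"{len(points)} points, dwell of at least {dwell:.0f} ms per point")
    print(f"full sweep takes at least {len(points) * dwell / 1000:.2f} s")
```

A timer-based trigger amounts to both instruments agreeing on this dwell time in advance, while a hardware trigger removes the need to budget for worst-case timing.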

To address these measurement challenges, Keysight's FieldFox analyzer has a function called extended range transmission analysis (ERTA). It connects two FieldFox analyzers together; triggers on each instrument synchronize the measurement.


Deploying 5G on mmWave presents great challenges to RF engineers. It is essential to have a robust channel model for 5G mmWave frequencies. Massive MIMO and beamforming are integral parts of 5G, and extensive early testing is required to make them deployable.

— Rolland Zhang, Senior Product Manager, Keysight Technologies
