The second part of our mobile megatest puts Cisco's mobile core offering and its applications services to the test

September 7, 2010

Earlier, Light Reading published the first part of the massive independent test of the next-generation mobile network infrastructure from Cisco Systems Inc. (Nasdaq: CSCO). (See Testing Cisco's Next-Gen Mobile Network and LR & EANTC Test Cisco’s Mobile IP Network.) Even a one-minute video montage conveys the sheer size of this project.

This article is the second feature highlighting the results of this enormous test. Before we get to the background and test setup, here's a table of contents for this special feature:

Page 8: Demo: Voice Over LTE

Page 9: Cisco’s Data Center

Page 13: Results: Data Center Interconnect

Page 14: Conclusion

Background

Our test team from the European Advanced Networking Test Center AG (EANTC) spent four weeks at Cisco's labs, validating the solutions presented to us. A key component we wanted to see in action was the mobile core: the logical data center area where the operator positions all the central packet and voice gateways, the auxiliary control plane systems required to run the mobile network, and consumer and business application servers.

At the beginning of the project late last year, we really scratched our heads when Cisco announced their willingness to join our test. How would they provide all the mobile core equipment? After all, a search for SGSN on Cisco’s Website returned zero hits back then. But with its acquisition of Starent Networks Inc., Cisco gained the ASR 5000, a single specialized hardware platform that implements the voice and packet gateway functions for both 3G and Long Term Evolution (LTE).

UMTS voice and packet gateways have served their purpose in the industry quite well in the past years. Our test comes at a moment when things are changing rapidly. The major current challenges of mobile operators include:

Mobile data growth: Mobile subscribers expect multi-Mbit/s (megabits per second) throughput over high-speed packet access (HSPA). All this traffic needs to transit the serving gateways and packet gateways. Performance requirements are much higher than before, and a large and growing fraction of subscribers has access to smartphones and other end systems capable of high-speed data. (See Cisco: Video to Drive Mobile Data Explosion.)

Migration to LTE, where the mobile core is called Evolved Packet Core (EPC): The drastic changes from 3G to LTE affect base stations, air interfaces, and backhaul networks. The LTE mobile core needs to grow to keep pace with the huge air interface bandwidth extensions, and it needs to support the all-IP radio access network (RAN) by providing suitable application policies, for example to differentiate operator-provided voice over IP and bulk data traffic.

Monetization: The mobile core is the key area where decisions are made to prioritize applications associated with revenue and to throttle bulk applications that just take away frequency spectrum and backhaul capacity (such as P2P, file sharing, etc.). The goal for mobile operators is to avoid too much precious spectrum being taken by applications that do not generate revenue.

This requires that vendors have an idea of which applications could generate revenue: certainly not a trivial question, and one whose answer will differ across subscriber bases worldwide. Cisco showed us some of its ideas; see the Mobile Smart Home demonstration on Page 12.

Another note on LTE: How far have implementations matured? The industry has been buzzing with success stories in press releases. But outside the four city areas served by Telia Company, no LTE network of any scale has started production-grade services yet. Many mobile operators are testing the technology, and most plan to deploy LTE only after 2012. We checked how well prepared Cisco’s current mobile core offerings are for LTE. Recall, too, that we tested Cisco’s LTE backhaul design in the previous article. (See Testing Cisco's Next-Gen Mobile Network.)

Test setup

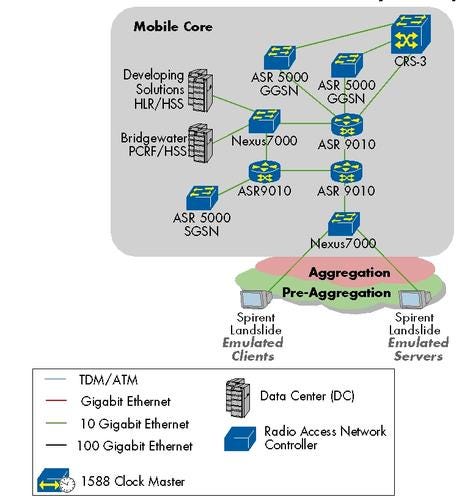

The test scale was phenomenal, even by previous EANTC and Light Reading standards. Our contacts at Spirent Communications plc were surprised when they heard our requirement to fully emulate more than 1 million mobile handsets for voice and data traffic. They had to bring in eleven of their fastest and largest Landslide mobile network emulators. The Landslides emulated the user equipment (UE), such as cellphones and mobile data cards, as well as the data aggregation and transport aspects of the emulated base stations.

Due to emulator limitations, we agreed that Spirent would configure 16 artificially large base stations in this test, each of which emulated 62,500 subscribers. We counted only registered and attached subscribers toward this figure.

In real life, only roughly one in five to one in ten consumer phones is on a voice call at any time. Today, with a lot of prepaid, voice-only phones still around, probably even fewer subscribers are actively exchanging data traffic. A noticeable fraction of customers with a contract or prepaid card might even switch off their phones. Factoring in any of these effects would have inflated the subscriber figures in an artificial, unrealistic way. We therefore abstained from any such statistical “improvements” and counted only real, attached subscribers.

The Landslide emulators were connected, through the network set up in the previous phase, to three ASR 5000s, which took 3G and LTE roles in turn: they were reconfigured from 3G to LTE after the first set of test cases had been completed. Because we required such a large farm of Landslides with twenty 10-Gigabit Ethernet and ten Gigabit Ethernet ports, we ended up using one of the Nexus 7000 switches to aggregate all the tester ports so we could reach the performance we intended to generate for the SGSN, GGSN, and EPC tests. This choice is reflected in the figure above.

For each subscriber, we emulated a range of application flows such as Voice over IP (VoIP), HTTP, plain Transmission Control Protocol (TCP), and User Datagram Protocol (UDP) data. All flows were IPv4-based in the Landslide/ASR 5000 tests.

In addition, we required auxiliary mobile core functions to complete the scenario:

The Home Location Register (HLR) in the 3G world was provided by an emulator from Developing Solutions, a Texas-based specialist test vendor for mobile core component emulation.

The HLR’s equivalent in the LTE world, called Home Subscriber Server (HSS), was provided by Bridgewater Systems Corp. (Toronto: BWC). In addition, to ease and speed up scalability testing, we used the Developing Solutions emulator for the HSS function in parallel as well. All tests involving an HSS were carried out twice – with the emulator and the production system.

Finally, a Policy and Charging Rules Function (PCRF), also supplied by Bridgewater, was required to interface with the main packet gateway. The PCRF makes decisions on behalf of the packet gateway for anything that requires looking up per-subscriber, rule-based behavior. A PCRF configuration can get quite advanced and could be a competitive differentiator for a mobile operator. Since this equipment was not the major topic of the test, we kept its configuration as trivial as possible. Its only purpose was to keep pace with our performance and scalability tests.

In this huge scenario, we evaluated a total of five test cases and two vendor demonstrations. Let’s see the details.

Carsten Rossenhövel is Managing Director of the European Advanced Networking Test Center AG (EANTC), an independent test lab in Berlin. EANTC offers vendor-neutral network test facilities for manufacturers, service providers, and enterprises. Carsten is responsible for the design of test methods and applications. He heads EANTC's manufacturer testing and certification group and its interoperability test events. Carsten has over 15 years of experience in data networks and testing. His areas of expertise include Multiprotocol Label Switching (MPLS), Carrier Ethernet, Triple Play, and Mobile Backhaul.

Jambi Ganbar, EANTC, managed the project, executed the IP core and data center tests and co-authored the article.

Jonathan Morin, EANTC, created the test plan, supervised the IP RAN and mobile core tests, co-authored the article, and coordinated the internal documentation.

Page 2: Results: ASR 5000 SGSN Attachment Rate

Summary: Cisco’s SGSN scaled up to 1,060,000 emulated 3G subscribers at a steady attachment rate of 4,200 subscribers per second, at the same time communicating with the GGSN to activate PDP contexts at a similar rate.

A Serving GPRS Support Node (SGSN), as its name hints, functions as a bridge between the mobile and the packet world. It deals with mobility aspects such as user attachment and location management as well as logical link management, authentication, and charging. The SGSN is also tasked with the actual delivery of data to and from the users – it is the last device upstream that sees both 3G voice and broadband data traffic in parallel.

Cisco’s ASR 5000 can be configured as an SGSN or a GGSN in a UMTS context (later we discuss its role in an LTE network). For this test, the ASR 5000 acted as an SGSN while the Spirent Landslide tester emulated Radio Network Controllers (RNCs) on one side of the SGSN and a GGSN on the other side.

Since the SGSN is the user’s first point of entry into the mobile core network, it must also be able to validate the user’s subscriber data, such as user profile and location or serving area, against a Home Location Register (HLR) (see the bottom of this page for more information).

Cisco’s claim, which we were asked to validate, was that the ASR 5000 could support 4,200 attachments per second and scale up to over a million attachments. These attachments represent users that are looking to access network resources. The test logic was, therefore, to set up a constant rate of 4,200 attachments per second until 1,060,000 users attached to the network. Each successful attachment was followed by a PDP context activation.

The relationship, in the mobile core network, between SGSN and GGSN is such that a number of SGSNs all connect to a single GGSN. Hence the attachment rate that a single SGSN must support is smaller than the PDP activation rate that should be supported by the GGSN. For this reason, in this test we focused on 4,200 attachments per second while in the GGSN test we verified a higher number of PDP context activations per second.
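The two rates translate into simple planning numbers. The sketch below is our own back-of-the-envelope calculation, not a Cisco or EANTC tool; it assumes a constant attachment rate and exactly one PDP context activation per attachment, and it reuses the 18,000 activations per second measured in the GGSN test on Page 3.

```python
# Back-of-the-envelope planning figures from the SGSN and GGSN results.
# Assumptions (ours): constant rates, one PDP context activation per attachment.

SGSN_ATTACH_RATE = 4_200      # attachments per second, SGSN test
SGSN_SUBSCRIBERS = 1_060_000  # emulated 3G subscribers, SGSN test
GGSN_PDP_RATE = 18_000        # PDP context activations per second, GGSN test


def seconds_to_attach(subscribers: int, rate_per_s: int) -> float:
    """Time to attach `subscribers` at a constant rate."""
    return subscribers / rate_per_s


def sgsns_per_ggsn(ggsn_rate: int, sgsn_rate: int) -> int:
    """Number of SGSNs running at full attachment rate that one GGSN can absorb."""
    return ggsn_rate // sgsn_rate


attach_time = seconds_to_attach(SGSN_SUBSCRIBERS, SGSN_ATTACH_RATE)
ratio = sgsns_per_ggsn(GGSN_PDP_RATE, SGSN_ATTACH_RATE)
print(f"Attach phase: {attach_time:.0f} s (about {attach_time / 60:.1f} min)")
print(f"SGSNs per GGSN: {ratio}")
```

The attach phase takes a little over four minutes, and the integer ratio of four matches the four-SGSNs-per-GGSN planning figure given at the end of this page.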

We tested the SGSN in the mobile core environment set up earlier. As each of the 1,060,000 emulated subscribers connected to the network, at a rate of 4,200 per second, the SGSN obtained the information necessary to authenticate them from the HLR. After a successful authentication, the SGSN informed the HLR of the subscriber’s registered location, and the HLR in turn pushed the subscriber's subscription profile to the SGSN. Cisco’s SGSN successfully supported this attachment rate and scale.
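The exchange described above can be modeled in a few lines. This is a simplified sketch, not the ASR 5000's implementation: the classes `Hlr` and `Sgsn` and their methods are hypothetical, authentication is assumed to succeed whenever the HLR knows the subscriber, and the method names merely echo the MAP operations (SendAuthenticationInfo, UpdateGprsLocation, InsertSubscriberData) that the Gr interface uses in practice.

```python
from dataclasses import dataclass, field


@dataclass
class Hlr:
    profiles: dict                                  # IMSI -> subscription profile
    locations: dict = field(default_factory=dict)   # IMSI -> serving SGSN ID

    def send_authentication_info(self, imsi: str) -> str:
        # Stand-in for MAP SendAuthenticationInfo: return authentication material.
        if imsi not in self.profiles:
            raise KeyError(imsi)
        return f"auth-vector-{imsi}"

    def update_gprs_location(self, imsi: str, sgsn_id: str) -> dict:
        # Stand-in for MAP UpdateGprsLocation: record the serving SGSN, then push
        # the profile back (InsertSubscriberData in a real network).
        self.locations[imsi] = sgsn_id
        return self.profiles[imsi]


@dataclass
class Sgsn:
    sgsn_id: str
    hlr: Hlr
    attached: dict = field(default_factory=dict)    # IMSI -> cached profile

    def attach(self, imsi: str) -> bool:
        try:
            _vector = self.hlr.send_authentication_info(imsi)
        except KeyError:
            return False                             # unknown subscriber: reject
        # A real SGSN would now challenge the handset with the vector; assumed to pass.
        self.attached[imsi] = self.hlr.update_gprs_location(imsi, self.sgsn_id)
        return True


hlr = Hlr(profiles={"001010000000001": {"services": ["data", "sms"]}})
sgsn = Sgsn("sgsn-1", hlr)
print(sgsn.attach("001010000000001"))  # True: authenticated, location updated
print(sgsn.attach("001010000000999"))  # False: not provisioned in the HLR
```

In the test, this three-step exchange ran 4,200 times per second against the Developing Solutions HLR emulator.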

So what is the message to mobile service providers? The newly minted Cisco SGSN seems to be a serious workhorse. The number of activations we were able to validate in this test shows that a ratio of four SGSNs to a single GGSN could be used in network planning, allowing each SGSN to aggregate a larger than normal service area and hence reduce operating cost.

Emulating the home location register

In order to circumvent an involuntary scalability test of the HLR, Developing Solutions graciously provided the HLR emulator and supported the test, connecting the HLR to the SGSN (Gr Interface). As no network-initiated services were tested, the GGSN was not connected to the HLR (Gc Interface).

Here is a little background on the HLR’s functions in the mobile core: It is the central repository, within a subscriber’s home network, that contains the profile of every mobile subscriber authorized to use the mobile network. This profile includes the subscriber's unique identifiers (e.g., phone number), authentication material, the services the subscriber has subscribed to, and the subscriber's current location. The network consults the HLR to determine which services the subscriber may access (e.g., roaming, data, or SMS) and to locate the subscriber (e.g., for incoming calls to a roaming subscriber).

Page 3: Results: ASR 5000 GGSN Session Setup Rate & Capacity

Summary: In a GGSN role, the ASR 5000 was able to maintain a total of 1 million attached subscribers. It forwarded a total of 20 Gbit/s across 380,000 of these emulated subscribers without any packet or session loss. 18,000 subscriber contexts were activated per second. The results confirm very robust scalability of the ASR 5000 in a 3G, GGSN role.

In our test, Cisco’s ASR 5000 played the roles of GGSN, SGSN, P-GW, S-GW, and MME one by one. This multi-role capability should be an operational advantage, streamlining sizing, power, management, and serviceability requirements. It certainly was in our test: when the hardware of one of the ASR 5000 cards failed, the Cisco support team could quickly pull a spare module from the shared pool. In this test, the focus was on the ASR 5000’s role as a Gateway GPRS Support Node (GGSN).

The GGSN is a crucial element in the mobile core infrastructure. It serves as the gatekeeper to the IP world and is, in many ways, a VPN router. Managing GPRS Tunneling Protocol (GTP) tunnels to each connected mobile terminal, it sets up and tears down packet data protocol (PDP) contexts, decapsulates tunneled IP data arriving from the SGSN into routed IP, and routes Internet traffic into the appropriate contexts on the return path. The GGSN has to manage subscribers and their IP addresses as well as enforce policies.
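To make the tunneling step concrete, here is a minimal sketch of GTPv1-U encapsulation and decapsulation of a user packet (a G-PDU, carried over UDP port 2152 in real networks). It handles only the 8-byte mandatory header with no optional fields, and the TEID, subscriber address, and payload in the usage lines are made up; it illustrates the principle, not how the ASR 5000 processes packets.

```python
import struct

GTPU_FLAGS = 0x30   # version 1, protocol type GTP, no optional fields
GTPU_G_PDU = 0xFF   # message type of a tunneled user packet (G-PDU)
HEADER = struct.Struct("!BBHI")  # flags, type, length, TEID (8 bytes, network order)


def gtpu_encapsulate(teid: int, inner_ip_packet: bytes) -> bytes:
    """Prepend the mandatory GTPv1-U header; length counts the bytes after it."""
    return HEADER.pack(GTPU_FLAGS, GTPU_G_PDU, len(inner_ip_packet), teid) + inner_ip_packet


def gtpu_decapsulate(frame: bytes) -> tuple[int, bytes]:
    """Return (TEID, inner IP packet). The TEID identifies the PDP context."""
    flags, msg_type, length, teid = HEADER.unpack_from(frame)
    if flags >> 5 != 1 or msg_type != GTPU_G_PDU:
        raise ValueError("not a GTPv1-U G-PDU")
    if flags & 0x07:
        raise ValueError("optional header fields are not handled in this sketch")
    return teid, frame[HEADER.size:HEADER.size + length]


# Return path: look up the subscriber's tunnel by IP address (hypothetical values).
tunnel_by_subscriber_ip = {"10.20.30.40": 0x1234ABCD}

payload = b"inner IPv4 packet bytes"
frame = gtpu_encapsulate(tunnel_by_subscriber_ip["10.20.30.40"], payload)
print(gtpu_decapsulate(frame) == (0x1234ABCD, payload))  # True
```

The per-subscriber lookup table is the "VPN router" aspect: every one of the million PDP contexts in the test corresponds to one such tunnel entry.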

We started off by performing essential baseline tests, aiming to answer the questions:

How many sessions can the GGSN activate per second? In other words, how large a cloud of active mobile broadband data users can an operator sensibly manage using a single Cisco ASR 5000?

How many mobile users will be supported simultaneously – i.e. what is a suitable operational ceiling for the number of mobile terminals under supervision of a single GGSN?

How much data can the GGSN process? In the era of high-speed packet access (HSPA), an operator selling multi-megabit downlink speeds to its customer base should have reliable planning numbers at hand.

The test logic had to follow the order of questions above: First users have to be authenticated and activated by the system; once they have been admitted to the mobile network and are allowed to use the data services, they can send and receive data. This meant that within one test we aimed to test both the control plane and data plane capabilities of the ASR 5000.

The test was set up such that a couple of Spirent Landslides emulated a total of four Serving GPRS Support Nodes (SGSNs) on one side of the GGSN under test. (We did not use real ASR 5000 SGSNs because scaling the test would have required more SGSN hardware than we had available.) Behind those SGSNs, the Landslides emulated the appropriate control protocols to represent RNCs, 16 base stations, and 1,060,000 subscribers, split up as follows:

380,000 sessions actively sending data all the time

620,000 sessions attached and idle

60,000 sessions in a make/break scenario, set up and disconnected at a rate of 1,000 sessions per second with a lifetime of one minute each.

The parameters were developed by EANTC based on discussions with Heavy Reading analysts and service providers. Since it is only logical that not all attached users are going to be using data services at the same time, we defined 38 percent of the users as actively sending and receiving.
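The session mix is internally consistent, as a quick calculation confirms. The 60,000-session make/break pool is exactly what Little's law predicts for 1,000 new sessions per second, each living 60 seconds, and 38 percent is the active share of the 1 million permanently attached sessions. The variable names are ours.

```python
ACTIVE_SESSIONS = 380_000       # sending data all the time
IDLE_SESSIONS = 620_000         # attached but idle
MAKE_BREAK_RATE = 1_000         # sessions set up (and torn down) per second
MAKE_BREAK_LIFETIME_S = 60      # lifetime of each make/break session

# Little's law: average concurrent sessions = arrival rate x average lifetime.
make_break_concurrent = MAKE_BREAK_RATE * MAKE_BREAK_LIFETIME_S

permanent_sessions = ACTIVE_SESSIONS + IDLE_SESSIONS
total_subscribers = permanent_sessions + make_break_concurrent
active_share = ACTIVE_SESSIONS / permanent_sessions

print(make_break_concurrent, total_subscribers, f"{active_share:.0%}")
# 60000 1060000 38%
```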

On the upstream side of the GGSN, the Landslide emulated Internet servers and hosts (for example, Web servers). We started the test by activating all 1,000,000 permanently active PDP contexts, with the farm of Spirent Landslides configured to activate a total of 18,000 PDP contexts per second. The ASR 5000 supported this rate and scaled up to our configured number of users, 1 million. Once the expected number of PDP contexts was reached, the Landslide started sending data traffic for the predefined 38 percent of the users.

At this stage, we started the make/break sessions with the additional pool of 60,000 users, who made new data calls and terminated them after 60 seconds. Context activations and deactivations are common in mobile networks; they increased the realism and challenge of the test. Our active users were configured with an array of representative applications, including HTTP and VoIP as well as plain TCP and UDP. The total amount of data traffic that the ASR 5000 had to process was 20.086 Gbit/s, roughly broken down into 6 Gbit/s sent from the users and 14 Gbit/s sent to the users. We let the traffic flow for 15 minutes while cycling through our make/break users. We also monitored the CPU utilization on the ASR 5000 and recorded at most 69 percent at any time.
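Two derived numbers help put these results in perspective: how long the initial activation phase took at 18,000 PDP contexts per second, and the average rate per actively sending session. This is our own arithmetic on the published figures, assuming traffic was spread evenly across the 380,000 active sessions; the per-application mix was not broken out.

```python
TOTAL_GBITS = 20.086                  # total processed traffic, Gbit/s
UPLINK_GBITS, DOWNLINK_GBITS = 6, 14  # rough split reported in the test
ACTIVE_SESSIONS = 380_000
PERMANENT_CONTEXTS = 1_000_000
ACTIVATION_RATE = 18_000              # PDP contexts per second

activation_time_s = PERMANENT_CONTEXTS / ACTIVATION_RATE
per_session_kbits = TOTAL_GBITS * 1e6 / ACTIVE_SESSIONS   # Gbit/s -> kbit/s
downlink_share = DOWNLINK_GBITS / (UPLINK_GBITS + DOWNLINK_GBITS)

print(f"Activation phase: {activation_time_s:.1f} s")
print(f"Average per active session: {per_session_kbits:.1f} kbit/s")
print(f"Downlink share: {downlink_share:.0%}")
```

The roughly 53 kbit/s average per active session indicates that this test stressed aggregate throughput across a very large number of sessions rather than per-user peak rates.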

Throughout the test, we did not lose any user session. The ASR 5000 was able to maintain all user sessions while processing a total of 20 Gbit/s worth of data traffic and performing 1,000 data call activations and terminations per second.

Since GGSNs tend to be cost intensive, service providers have a vested interest in minimizing their numbers. The test results validated Cisco’s claims that the ASR 5000 is a robust and scalable GGSN.

Page 4: Results: ASR 5000 GGSN Performance With DPI

Summary: In a GGSN role, the Cisco ASR 5000, configured with a Cisco-selected list of 900 HTTP, 99 TCP/UDP stateful filter rules plus one BitTorrent filter rule, achieved the exact same 20 Gbit/s throughput performance with 1 million active subscribers as in the previous GGSN test.

Managing consumer traffic at the application level, being able to prioritize revenue-creating applications and to forward less-favored traffic in a best-effort way has long been a goal of fixed network service providers. The underlying functions are called Deep Packet Inspection (DPI) and traffic conditioning. In the fixed network, specialized content filters have been on the market for a few years and have been tested for performance extensively.

While bulk traffic on flat rates, for example from file sharing (BitTorrent, Rapidshare, Usenet...) or free video streaming (YouTube, etc.), is a nuisance to DSL and cable providers, it is a life-threatening concern for any mobile operator offering high-speed packet access to PCs through dongles or tethering. The most important bandwidth bottleneck in a mobile network is frequency spectrum: it cannot be extended easily and is shared among users. Mobile operators have to manage the spectrum carefully, and they do so through content filtering. Nobody likes to admit it because of the ongoing net neutrality debate, but content filtering is a fact of life in most mobile networks.

In light of this challenge, vendors are advancing their solutions. Content filtering has typically been conducted by specialized, separate devices in the mobile core. Carriers, however, favor integrated solutions for ease of management and maintenance. It comes in handy, then, that Cisco’s ASR 5000 claims to implement DPI internally. While we did not have time to validate all the internals of the filtering implementation in this test, we wanted to trial DPI performance and functionality in at least one test case.

The key questions in DPI tests are how many rules there are, of what kind, and in what detail. Cisco made it abundantly clear that the DPI function is specifically optimized for the scenario that, according to Cisco, its customers see most often. Consequently, Cisco's ASR 5000 team turned down our initial set of 1,000 filtering rules, many of which were based on Layer 4 stateful packet inspection (SPI), although according to the manuals the system was supposed to handle them. The scenario Cisco suggested involved 900 Layer 7 HTTP rules with short prefixes and 95 Layer 4 SPI rules based on the TCP/UDP header. Cisco’s statement was that in this specific scenario, for which the device was designed, we could expect the same performance as in our previous GGSN performance test. Declining the lab’s preferred configuration is a rather unusual move for a vendor in an independent test, but the request sounded reasonable after all. In fact, none of the first 995 rules in either rule set was ever to be hit by any traffic. Their sole purpose was to create a lookup challenge before the traffic would match one of the final five good rules in the configuration:

Voice over IP traffic defined as IP/UDP with source and destination port 4900

Peer-to-peer dynamic BitTorrent traffic (full BitTorrent protocol detection not based on any port numbers but just on deep packet content)

HTTP URL matching an 87-character-long prefix (there was another HTTP URL rule not to be matched, which differed only in the 87th character from this one)

IP/TCP traffic defined with one source port and a different destination port

IP/UDP traffic defined with one source port and a different destination port
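The rule layout above can be sketched as an ordered, first-match rule table: every test flow had to be checked against 995 rules that never matched before reaching one of the five good ones. The sketch is ours and deliberately naive (a linear scan); Cisco has not disclosed how the ASR 5000 optimizes its lookup. The TCP/UDP port numbers other than 4900 and the URL prefix are hypothetical, since the article does not list them, and the BitTorrent check only looks for the standard handshake prefix (the byte 19 followed by "BitTorrent protocol"), whereas production DPI also copes with obfuscated variants.

```python
from dataclasses import dataclass
from typing import Callable, Optional

BT_HANDSHAKE = b"\x13BitTorrent protocol"  # standard BitTorrent handshake prefix


@dataclass
class Flow:
    proto: str              # "tcp" or "udp"
    src_port: int
    dst_port: int
    payload: bytes = b""
    url: str = ""


@dataclass
class Rule:
    name: str
    matches: Callable[[Flow], bool]


def port_rule(name: str, proto: str, sport: int, dport: int) -> Rule:
    return Rule(name, lambda f: (f.proto, f.src_port, f.dst_port) == (proto, sport, dport))


def url_rule(name: str, prefix: str) -> Rule:
    return Rule(name, lambda f: f.url.startswith(prefix))


def classify(flow: Flow, rules: list[Rule]) -> Optional[str]:
    """Linear first-match lookup: the first rule that matches wins."""
    for rule in rules:
        if rule.matches(flow):
            return rule.name
    return None


# Hypothetical 87-character URL prefix, plus a decoy differing only in character 87.
GOOD_PREFIX = "http://www.example.com/" + "a" * 64
DECOY_PREFIX = GOOD_PREFIX[:-1] + "b"

# 995 filler rules that the test traffic never hits (900 HTTP, 95 Layer 4) ...
rules = [url_rule(f"http-filler-{i}", f"http://filler{i}.example/") for i in range(899)]
rules.append(url_rule("http-decoy", DECOY_PREFIX))
rules += [port_rule(f"l4-filler-{i}", "tcp", 1, 1 + i) for i in range(95)]
# ... followed by the five good rules (ports other than 4900 are hypothetical).
rules += [
    port_rule("voip", "udp", 4900, 4900),
    Rule("bittorrent", lambda f: f.proto == "tcp" and f.payload.startswith(BT_HANDSHAKE)),
    url_rule("http-good", GOOD_PREFIX),
    port_rule("tcp-good", "tcp", 5001, 6001),
    port_rule("udp-good", "udp", 5002, 6002),
]

print(classify(Flow("udp", 4900, 4900), rules))                            # voip
print(classify(Flow("tcp", 51413, 6881, payload=BT_HANDSHAKE), rules))     # bittorrent
print(classify(Flow("tcp", 49152, 80, url=GOOD_PREFIX + "/clip"), rules))  # http-good
```

A linear scan like this makes the cost of the 995 "miss" rules obvious; the point of the test was to see whether the ASR 5000's real lookup keeps that cost from showing up in throughput.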

As a side note, the ASR 5000 product documentation (version 10.0) lists support for detection of over 64 peer-to-peer and streaming protocols, including the classics (BitTorrent, eDonkey, Gnutella), voice over IP protocols (Skype, Google Talk, Skinny), games (for example World of Warcraft, Xbox, SecondLife), video streaming (Slingbox etc.), and chat protocols (Fring, Yahoo, MSN...). The operator can not only block such traffic but also police its bandwidth, for example degrading third-party VoIP quality below that of the operator's own voice service, which subscribers would find hard to pinpoint. In the available time, we tested only BitTorrent blocking.

Once the rules were configured on the ASR 5000 and the Landslide emulator was set up properly, we started a run similar to the previous one, building up to a total of 1,000,000 stable users pulling 20 Gbit/s of traffic, with 60,000 “make/break” calls in parallel. The only difference was that we reduced the activation rate to 5,000 activations per second, because this was not meant to be an activation performance test.

Trivial policies for two of the good rules (BitTorrent and HTTP) were installed into a PCRF system supplied by Bridgewater. Once the PCRF had manually cached these two rules for all 1 million users, it was able to keep up with the load and attachment rate of our test. There was nothing particularly complex about the PCRF configuration (no charging or policy attributes at all); however, the PCRF added a bit of context and realism.
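Pre-caching is what let a deliberately trivial policy setup keep up with 1 million subscribers: once every subscriber's decision is precomputed, answering the gateway becomes a dictionary lookup rather than a rule evaluation. The sketch below is a hypothetical illustration of that idea only; the class and field names are ours, and a real PCRF answers the gateway over the Diameter Gx interface rather than through a function call.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyDecision:
    block_bittorrent: bool   # one of the two good rules with a PCRF policy
    http_rule_active: bool   # the other one (HTTP URL rule)


class CachedPcrf:
    def __init__(self, subscriber_ids, default: PolicyDecision):
        # Warm the cache for every subscriber before the test traffic starts.
        self._cache = {sub: default for sub in subscriber_ids}
        self.requests_served = 0

    def decide(self, subscriber_id):
        """Answer a gateway's policy request at session setup; None if unknown."""
        self.requests_served += 1
        return self._cache.get(subscriber_id)


pcrf = CachedPcrf(range(1_000_000), PolicyDecision(block_bittorrent=True, http_rule_active=True))
print(pcrf.decide(42))         # PolicyDecision(block_bittorrent=True, http_rule_active=True)
print(pcrf.decide(2_000_000))  # None: not provisioned
```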

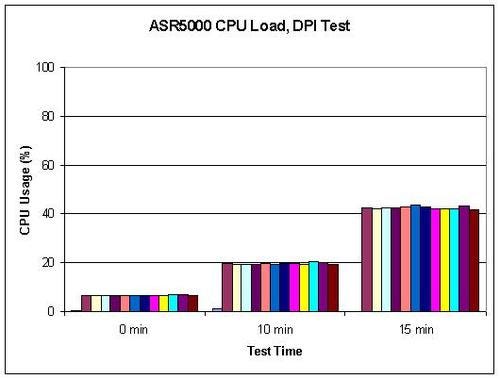

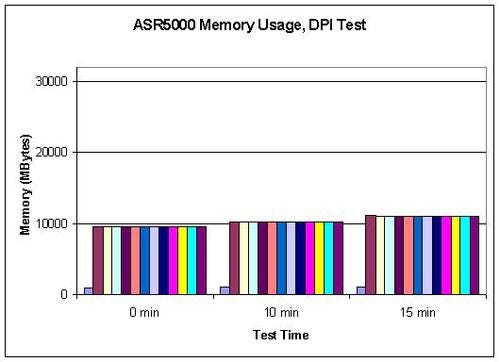

The ASR 5000 mastered this configuration without any noticeable issues. Sessions were activated, maintained and packets forwarded under emulated load exactly as in the previous test without DPI. We observed both the CPU and memory usage and the count of which DPI rules were hit on the ASR 5000 – all 13 primary CPUs remained under 44 percent load and used 11 Gbytes (out of 32 Gbytes) of their memory or less over the 15-minute test duration.

In addition, we monitored CPU usage on the cluster of Bridgewater PCRF systems. It had taken the Bridgewater support team two weeks to stage the system. Due to high and variable message traffic rates, the team adjusted the processing threads, buffers, and in-memory caching parameters to achieve lossless responses to all 1 million requests. The Bridgewater team was extremely dedicated and worked day and night to tune the system for the tests, and while we weren’t able to witness the system working live, Bridgewater did confirm the final results. In the future, in addition to testing the scalability of the Bridgewater solution of up to 1 million users for policy requests, we’d want to stress test the PCRF with realistic policies and charging rules, especially those related to advanced video and data functions, as well as test for carrier-grade availability and resiliency.

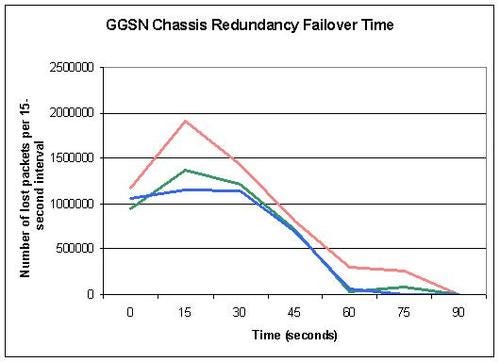

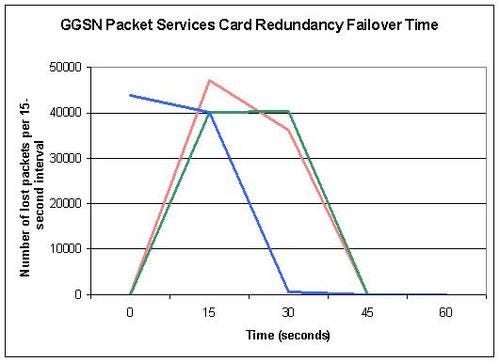

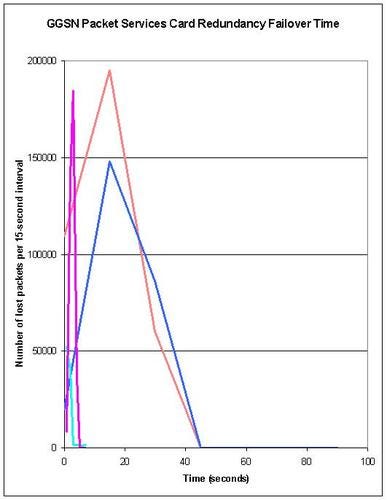

Page 5: Results: Cisco ASR 5000 GGSN Redundancy

Summary: The ASR 5000, working as a GGSN, did not lose any of 1 million active sessions during a hard node failure (chassis power-off), a packet services card failure, or a system processor card failure. Data paths were restored in less than 90 seconds (chassis failure) and 45 seconds (component failure).

In competitive markets, highly available networks and services are important assets for carriers. It is when these services fail that consumers suddenly notice how much they depend on their mobile phones, text messages, and ubiquitous Internet access. In such cases, consumers develop a collective memory: Network outages are not forgotten, and mobile users are willing to switch to a new service provider if they experience even a few annoyances during their service contract.

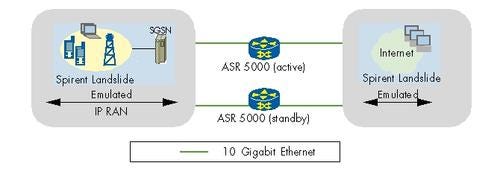

With the ASR 5000 being positioned as a very large, central system in Cisco’s mobile IP network evaluated in this project, it would be inexcusable for the ASR 5000 to fail for an extended amount of time. Our test of redundancy mechanisms focused on the GGSN role of the ASR 5000. Cisco claimed zero session loss in the case of three types of hardware failure:

Node failure: Two ASR 5000 GGSNs were configured in a 1:1 configuration in which one would constantly copy the state of the other. The backup device remains idle (unused) until the primary fails.

Packet Services Card (PSC): Unlike a link failure, this failure hits the packet-processing intelligence. The ASR 5000 uses a card for this function that is separate from the line card where the cable is plugged in. Like any piece of hardware, it could fail, so an extra PSC was installed in the chassis as a hot standby. Traffic from the line card can be redirected to the standby PSC regardless of its slot location.

System Processor Card (SPC): Central control module for the chassis.
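Why zero session loss is achievable on a chassis failure becomes clear from a toy model of the 1:1 configuration: the primary checkpoints every session change to the idle standby, so the standby already holds all session state when the primary dies and handsets do not need to reattach. The sketch is hypothetical and assumes synchronous checkpointing; it says nothing about how the ASR 5000 actually replicates state, and a system that checkpoints asynchronously could lose sessions created just before the failure.

```python
from dataclasses import dataclass, field


@dataclass
class GatewayNode:
    name: str
    sessions: dict = field(default_factory=dict)   # session ID -> session state
    alive: bool = True


class OneToOnePair:
    """Active node serves traffic; every change is checkpointed to the idle standby."""

    def __init__(self) -> None:
        self.active = GatewayNode("gw-a")
        self.standby = GatewayNode("gw-b")

    def create_session(self, sid: int, state: dict) -> None:
        self.active.sessions[sid] = state
        self.standby.sessions[sid] = dict(state)     # synchronous checkpoint copy

    def delete_session(self, sid: int) -> None:
        self.active.sessions.pop(sid, None)
        self.standby.sessions.pop(sid, None)

    def fail_active(self) -> int:
        """Power off the active node; the standby takes over with its replicated state."""
        self.active.alive = False
        self.active, self.standby = self.standby, self.active
        return len(self.active.sessions)

    def restore_standby(self) -> None:
        """Bring the failed node back and resynchronize it from the new active node."""
        self.standby.alive = True
        self.standby.sessions = {sid: dict(s) for sid, s in self.active.sessions.items()}


pair = OneToOnePair()
for sid in range(1_000):
    pair.create_session(sid, {"teid": sid, "apn": "internet"})
print(pair.fail_active(), pair.active.name)   # 1000 gw-b
pair.restore_standby()
print(pair.fail_active(), pair.active.name)   # 1000 gw-a
```

The fail, stabilize, and fail-back sequence in the usage lines mirrors the alternating chassis failures described below; what the model cannot show is data-path recovery time, which is what the test actually measured.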

For the purpose of this test, we re-designated one of the available ASR 5000s as a secondary GGSN. This system served in this function only for the redundancy test case. To fail the node, we powered off the device after 1 million sessions had been activated and traffic from 380,000 sessions was running. We did the same for the cards; however, these were simply pulled from the chassis. In the chassis failure case, we failed the active node, measured the failover time, and waited until everything was stable; we then repeated the test by failing the second node, which was now active, and finally failed the originally active node again, for a total of three tests.

The results showed:

For chassis failure, zero session loss was achieved in all runs, and sessions did not have to be reattached. This is important to keep the control plane traffic manageable in case of a failover.

We also noticed that the application data flow at the TCP layer recovered in less than 90 seconds, not bad given the huge scale of the test. (We measured at the TCP layer because the Spirent Landslide uses stateful traffic and does not provide access to lower-layer statistics. Also, the Landslide statistics were bulk results not sorted by session and were available only in 15-second intervals, which is why we cannot show more precise results.)

For the Packet Services Card failure, zero session loss was achieved again, and the system recovered from packet loss in less than 60 seconds across all three runs. The faster recovery and much smaller scale of packet loss seemed natural, since just a single component failed: much less challenging than failing over to a secondary chassis as a whole, and not affecting all sessions anyway.

Finally, the initial System Processor Card failure results were a surprise to all: The system did not recover from packet loss even after a number of minutes. Cisco investigated the issue, noticed that the card had locked up after receiving a small number of malformed packets from Landslide, and provided a bug fix.

When the test was rerun, the system processor cards recovered from a hardware failure just as well as the other cards. Since the rerun was conducted remotely, it was not clear whether a special latch on the card, which initiates a graceful maintenance shutdown, had been pushed before the card was pulled from the system.

The three iterations showed the card did not lose any sessions either. It recovered from the vast majority of packet loss within less than 45 seconds. In two test runs, there was very minor trailing packet loss of one packet per second or less for up to four minutes after the failover.

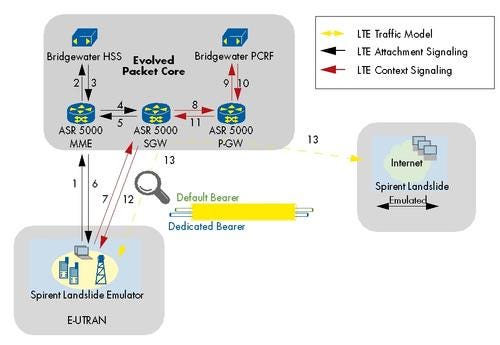

Page 6: Results: Evolved Packet Core Performance

Summary: Cisco’s ASR 5000, configured for the three Evolved Packet Core roles in an LTE network, scaled up to 1 million emulated subscribers and 20 Gbit/s throughput, including differentiated quality for voice and data services.

The goal of this test was to evaluate the Cisco ASR 5000’s quality and performance in a Long Term Evolution (LTE) scenario.

Long Term Evolution – what a modest name for a new technology that has made manufacturers and operators shiver with excitement for two years already, some looking forward to the vast innovation, others worried about the huge deployment effort and cost. The 3rd Generation Partnership Project (3GPP) has evolved mobile network standards since the invention of UMTS (3G). The Release 8 standards family, frozen in December 2008, was the first one to be called LTE. Release 8 also includes standards for the supporting central (wired) infrastructure services, the Evolved Packet Core (EPC).

In LTE, the entire network is IP packet based, end to end: a relief from the burden of backhauling legacy ATM and TDM, as required in UMTS versions up to Release 6, and something Cisco is naturally comfortable with. One half of Cisco’s IP RAN network design tested in the first part of this article was an “all-IP” infrastructure consisting of ASR 1000 and ASR 9000 routers, without ATM pseudowires or TDM circuit emulation.

The main functions in the Evolved Packet Core are the Mobility Management Entity (MME), the Serving Gateway (SGW), and the Packet Data Network Gateway (PGW). The role of the SGW loosely corresponds to that of the SGSN in 3G; the PGW is, to some extent, equivalent to the GGSN. For more details about the LTE Evolved Packet Core, please refer to external documentation.

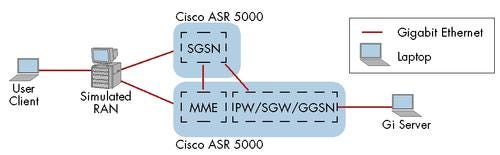

Once we had completed the 3G testing, Cisco reconfigured the three ASR 5000s to act as an MME, an SGW, and a PGW, respectively. The attachment flow and logical test setup are depicted in the diagram below. The Policy and Charging Rules Function (PCRF), a function that exists in both 3G and LTE networks, was configured as simply as possible, since it was just an auxiliary system needed to get the test environment going (see the DPI test for more information).

Now, here is an undergrad technology question: What does one need in an all-IP, end-to-end multiservice network to ensure voice, video, and data transfer quality? Exactly: differentiated services with Class of Service (CoS). This is not a big deal in the fixed network, but on the air interface, spectrum needs to be reserved for higher-priority services. To steer this spectrum allocation, Cisco configured two bearers in the EPC: a “default bearer” and a “dedicated bearer.” The dedicated bearer was to be used for carrier voice-over-IP applications. The PCRF determined which traffic used which bearer. The configuration here was quite basic, just enough to establish the two bearers per user.
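The text does not state which QoS parameters Cisco configured for the two bearers. As an illustration only, the Python sketch below assumes the standardized QoS Class Identifiers from 3GPP TS 23.203, QCI 9 (non-GBR, best effort) for the default bearer and QCI 1 (GBR, conversational voice) for the dedicated bearer, and a hypothetical packet filter that steers RTP media on an assumed UDP port range onto the dedicated bearer. All names and port numbers are assumptions, not Cisco's configuration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Bearer:
    name: str
    qci: int          # QoS Class Identifier (3GPP TS 23.203)
    gbr: bool         # guaranteed bit rate (True) or non-GBR (False)


# Assumed QCI choices: 9 (non-GBR, best effort) for the default bearer and
# 1 (GBR, conversational voice) for the dedicated voice bearer.
DEFAULT_BEARER = Bearer("default", qci=9, gbr=False)
VOICE_BEARER = Bearer("dedicated-voice", qci=1, gbr=True)


@dataclass(frozen=True)
class PacketFilter:
    protocol: str                 # "udp" or "tcp"
    dst_ports: Tuple[int, int]    # inclusive port range


# Hypothetical filter for the dedicated bearer: RTP media on an assumed UDP
# port range. In a real EPC, such filters derive from the PCRF's policy rules.
VOICE_FILTERS: List[PacketFilter] = [PacketFilter("udp", (16384, 32767))]


def select_bearer(protocol: str, dst_port: int) -> Bearer:
    """Map a packet to a bearer; anything unmatched uses the default bearer."""
    for f in VOICE_FILTERS:
        low, high = f.dst_ports
        if protocol == f.protocol and low <= dst_port <= high:
            return VOICE_BEARER
    return DEFAULT_BEARER


if __name__ == "__main__":
    print(select_bearer("udp", 16500).name)  # dedicated-voice
    print(select_bearer("tcp", 80).name)     # default
```

The design point the sketch captures is that only the dedicated bearer needs explicit filters; the default bearer catches everything else, which is why a "quite basic" policy is enough to establish two bearers per user.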

During configuration, Cisco encountered issues with the two bearers, related to the signaling of new bearer creation; the problems were identified and fixed within only a few days. From then on, we were able to run the test with two bearers.

In terms of performance, we configured the Spirent Landslide to reach the same steady-state numbers as in the GGSN performance tests: 1 million subscribers using a total of 20 Gbit/s. This time, however, the attachment rate was reduced to 5,000 users per second, as Cisco indicated that this would be a safe LTE performance goal for the available hardware.
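A quick back-of-the-envelope check of these targets, as a Python sketch: at 5,000 attachments per second, reaching 1 million subscribers takes at least 200 seconds, and 20 Gbit/s spread evenly across all subscribers averages 20 kbit/s each. The even spread is an assumption for illustration; if, as in the GGSN failover test, only a subset of sessions carried traffic, the per-session rate would be correspondingly higher.

```python
def ramp_time_s(subscribers: int, attach_rate_per_s: int) -> float:
    """Minimum time to attach all subscribers at a constant attachment rate."""
    return subscribers / attach_rate_per_s


def avg_rate_per_subscriber_bps(aggregate_bps: float, subscribers: int) -> float:
    """Average throughput per subscriber, assuming traffic is spread evenly."""
    return aggregate_bps / subscribers


if __name__ == "__main__":
    print(ramp_time_s(1_000_000, 5_000))                 # 200.0 seconds
    print(avg_rate_per_subscriber_bps(20e9, 1_000_000))  # 20000.0 bit/s
```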

The results were great: all users were successfully attached at the configured rate, and their traffic passed through without problems, correctly split between the two configured bearers.

Page 7: Demo: 3G to LTE Mobile Internet Handoff

Summary: Cisco’s ASR 5000, configured for the three Evolved Packet Core roles in an LTE network, supported an emulated handover procedure from 3G to LTE for a single data connection, using a Cisco-provided protocol emulator.

In addition to the scalability and performance tests of the mobile core, Cisco wanted us to witness some additional functions of the ASR 5000, one of which is described in this section. We named them demonstrations instead of tests because of the less stringent requirements for execution.

One of the migration challenges with LTE is how to integrate the new infrastructure with existing 3G network services, without requiring subscribers to switch over or turn off their terminals manually each time they move between LTE and 3G coverage areas. The LTE standards have indeed considered the importance of this dual-network operation scenario: the handover procedure is defined by the 3GPP in Technical Specification (TS) 23.401. Vendor support, however, seems to be only just emerging.

This demonstration was not conducted using the main test topology; instead, we were presented with a scenario as shown in the diagram above. A laptop connected directly to Cisco’s “homebrew” simulator requested a video stream from another laptop acting as a server. The simulator was then configured to switch back and forth every two minutes between connecting via the E-UTRAN protocol family and connecting via GPRS. (No base stations were involved.)

We were able to see the handover as a “blip” in the video stream, which was only mildly annoying; thanks to Cisco’s distracting choice of movie trailers, we had to be reminded that a demonstration was going on. Additionally, the Cisco engineers showed us running logs and statistics on each of the two ASR 5000 devices to prove that the emulated handover between 3G and LTE had really happened.

The base station vendors look forward to the day when LTE will have been deployed everywhere; meanwhile, it is good to know that this handover mechanism is functional on the ASR 5000.

Page 8: Demo: Voice Over LTE

Summary: Cisco demonstrated on a functional basis that the ASR 5000 supports mobile core functions for Voice over LTE: Call Session Control Functions (Proxy-CSCF, Serving-CSCF). Effectively a single Cisco hardware platform can drive five functions in the mobile core: MME, SGW, PGW, and the two CSCF functions.

So far, the primary focus for LTE has been to provide mobile broadband data services. Providing voice-over-IP (VoIP) calls through the whole infrastructure is not as trivial as it may seem; the challenge is to support voice services (via SIP) in the mobile core. To this end, a Voice over LTE via Generic Access (VoLGA) industry forum has been formed with the goal of accelerating the development of interoperable implementations.

Cisco offered to demonstrate its progress in voice-over-LTE support on the ASR 5000. According to Cisco, the platform implements the proper Call Session Control Functions (CSCF):

The Proxy-CSCF, which, like any proxy, provides security functions

The Serving-CSCF (the SIP server)

In a simple demo, Cisco connected two PCs, each running a SIP-based VoIP program, to two different call generators running homegrown software that emulated the eNodeBs. Once the call generators had established default bearers to the PGW, we were able to start a SIP call between the two PCs.

The ability to set up a one-box-shop using the ASR 5000 will certainly be an advantage for some mobile providers – specifically in terms of maintenance.

Page 9: Cisco’s Data Center

The Mobile Data Center: Introduction

After we validated almost all the components required to set up the wireline part of a mobile network (excluding base stations, microwave systems and management) it was time to consider useful applications of such a network. After all, the reflex-like requests for “more bandwidth” are a constant push for network expansion, but they are not likely to pay for themselves, or even increase profitability of a network. Almost all mobile network markets worldwide are competitive today, so pricing cannot be easily increased for new technology deployments.

Service providers ask themselves: What revenue-generating services could be offered to mobile subscribers? And how would they be delivered? There is no silver bullet response. Nevertheless, we asked Cisco about their ideas.

Cisco presented their data center components and applications. They reasonably argued that – before even thinking about how to market applications – a mobile operator implementing broadband services over LTE needs to deploy data center infrastructure way more powerful than required today.

Cisco brought in its partner iControl as well as its Wide Area Application Services (WAAS) Mobile product to demonstrate potential applications and their implementation in the data center. To support its claims of a huge increase in traffic, Cisco deployed a generous 100-Gigabit Ethernet link in the data center, which we spot-checked. Cisco also presented some ideas for edge-supported secure mobile business services, which led to an evaluation of functions in Cisco’s ASR 1006.

This is also the second area in this program in which Cisco was permitted to demonstrate solutions, the first being Voice over LTE and the 3G-to-LTE Mobile Internet Handoff. As used in this article, “demonstration” means that Cisco showed EANTC engineers a functional demo of a feature or solution, which EANTC verified only by personal witness and by asking a lot of questions, and whose reproducibility we secured by copying configurations and command line interface (CLI) output. For demonstrations, we did not question the test parameters, nor did we test performance, scalability, or compliance with standards.

Page 10: Results: ASR 1006 Firewall and Network Address Translation Functionality and Scalability

Summary: Cisco demonstrated that the ASR 1006 implements IPv4 Network Address Translation while at the same time acting as a stateful firewall for HTTP and FTP connections.

Part of the network design that Cisco suggested for the test involved using its ASR 1006 router in the regional data center, where it fulfilled several roles. In this section we discuss its role as a Network Address Translator and firewall. Both roles, aimed at mobile business subscribers, made a lot of sense from Cisco’s point of view: let the mobile worker or telecommuter work from any coffee shop she likes, while hiding her IP address and protecting her connection with some firewall functionality. It is important to note in this context that mobile workers could easily attach to a mobile service provider’s 3G or LTE network using a USB stick.

For this test we used Spirent’s Avalanche Layer 4-7 tester to simulate 80,000 subscribers, all using private IP space. Our emulated subscribers opened connections to emulated servers using public IP addresses, which meant that the ASR 1006 had to perform IPv4 Network Address Translation (NAT) to provide connectivity. Cisco claimed that the ASR 1006 was powerful enough to open 200,000 connections per second, for a total of a million connections. This statement was easy to verify.

The Cisco ASR 9010 was set up as an aggregation router, using the same topology as in the other tests. The ASR 1006 was attached to the ASR 9010 using a single 10GbE interface. We suspect we would be hard pressed to find a service provider brave enough not to provide at least a redundant connection for important business services. Nonetheless, this is the configuration that Cisco suggested for the test, and since the focus here was on the number of subscribers using the NAT and firewall functionality, we did not object too loudly. To facilitate the test, we used Spirent’s Avalanche HTTP emulator to terminate streams on both the client and server side.

Emulated subscribers were set up to access 21 emulated Web servers; those that attempted to use FTP were blocked by the ASR 1006’s firewall functionality. Each subscriber opened multiple HTTP connections, such that around 200,000 connections were being opened per second, while FTP connections were blocked. Once we reached a million connections, we held a steady state for several minutes.
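At 200,000 new connections per second, the 1-million-connection mark is reached after about five seconds. To make the two functions more concrete, the Python sketch below models a toy port-based NAT (NAPT) combined with a firewall rule that drops FTP control connections (TCP port 21). It is a hypothetical illustration of the general technique, with invented class and parameter names, and says nothing about how the ASR 1006 implements NAT or firewalling internally.

```python
import ipaddress
from typing import Dict, Optional, Tuple

# Firewall policy for this sketch: drop FTP control connections (TCP port 21).
BLOCKED_DST_PORTS = {21}

FlowKey = Tuple[str, int, str, int]  # (src_ip, src_port, dst_ip, dst_port)


class ToyNapt:
    """Toy NAPT (port-based NAT) with a port-blocking firewall rule.

    Hypothetical illustration only: one public address, sequential port
    allocation, TCP only, no timeouts. Not the ASR 1006 implementation.
    """

    def __init__(self, public_ip: str, first_port: int = 1024,
                 last_port: int = 65535) -> None:
        self.public_ip = str(ipaddress.ip_address(public_ip))
        self._next_port = first_port
        self._last_port = last_port
        self.table: Dict[FlowKey, int] = {}

    def open(self, src_ip: str, src_port: int, dst_ip: str,
             dst_port: int) -> Optional[Tuple[str, int]]:
        """Return the translated (public_ip, public_port), or None if blocked."""
        if dst_port in BLOCKED_DST_PORTS:
            return None  # rejected by the firewall rule; no mapping created
        if not ipaddress.ip_address(src_ip).is_private:
            raise ValueError("subscriber addresses are expected to be private")
        key = (src_ip, src_port, dst_ip, dst_port)
        if key not in self.table:
            if self._next_port > self._last_port:
                raise RuntimeError("public port pool exhausted")
            self.table[key] = self._next_port
            self._next_port += 1
        return (self.public_ip, self.table[key])
```

Because this toy allocates a fresh public port for every connection, a single public address runs out after 64,512 connections (ports 1024 to 65535). At the 1-million-connection scale tested, a NAT needs a pool of public addresses (at least 16 in this naive scheme) or must reuse ports across different destinations.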

This scenario demonstrated that Cisco’s ASR 1006 is indeed an able NAT/Firewall machine. It was able to support our users and to scale up to 1 million open connections.

Page 11: Results: ASR 1006 Session Border Control Functionality & Scalability

Summary: Cisco demonstrated a sample of fixed-network-style SIP Session Border Controller (SBC) functionality for up to 40,000 emulated VoIP customers on a single ASR 1006.

After the firewall/NAT demonstration, Cisco presented the ASR 1006 in a second demo focusing on Session Border Controller functions in a regional data center.

In this demonstration, the Cisco team reconfigured the router for another function: Session Border Controller (SBC) for voice over IP. In this role, the Cisco ASR 1006 acted as an intermediary between one network and another. The clients in this scenario were emulated by Spirent’s Avalanche and attempted to start SIP calls through the SBC. The SBC’s role was then to forward the SIP calls to a set of 21 SIP gateways.

The test should have been simple: set up a number of calls and verify that they are accepted and performed. Unfortunately, the ASR 9010 aggregation router used in the test exhibited a limitation that slowed us down. The router’s line card used in the test supported up to 32,000 ARP entries. We consider this number sufficient for a backbone router, but not for the data center interface role that Cisco had selected the ASR 9010 for in this test.

We tried to work around the ARP limitation by setting up a virtual router on the Avalanche testers, but we encountered an interesting limitation there as well, so we had to make some compromises.

In the final scenario, we registered almost 40,000 unique phone book entries with the SBC. We then established 39,979 calls at a rate of 231 calls per second, using an equal mix of the G.711 μ-law and G.729 media codecs, as suggested by Cisco. LTE voice might become more advanced; it was not entirely clear to us whether the call model used by Cisco would be sufficient for mobile operators by the time such SBCs are deployed.
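The call numbers lend themselves to a rough sizing exercise, sketched below in Python. Establishing 39,979 calls at 231 calls per second takes about 173 seconds. For media load, the sketch uses the nominal codec rates (64 kbit/s for G.711, 8 kbit/s for G.729) and assumes, beyond what the text states, 20-ms packetization, uncompressed IPv4/UDP/RTP headers (40 bytes), one RTP stream per call direction, and media actually flowing through the SBC. Under those assumptions, the one-direction IP-layer load comes to roughly 2.08 Gbit/s.

```python
import math

CALLS = 39_979
CALL_RATE_PER_S = 231
PTIME_MS = 20                 # assumed packetization interval (20 ms)
HEADER_BYTES = 20 + 8 + 12    # IPv4 + UDP + RTP headers, no compression

# Nominal codec payload bit rates.
CODEC_PAYLOAD_BPS = {"G.711": 64_000, "G.729": 8_000}


def setup_time_s(calls: int, rate_per_s: int) -> float:
    """Minimum time to establish all calls at a constant setup rate."""
    return calls / rate_per_s


def ip_rate_bps(codec: str, ptime_ms: int = PTIME_MS) -> int:
    """IP-layer bit rate of one RTP stream in one direction (no layer-2 overhead)."""
    packets_per_s = 1000 // ptime_ms
    payload_bytes = CODEC_PAYLOAD_BPS[codec] * ptime_ms // 8000
    return (payload_bytes + HEADER_BYTES) * 8 * packets_per_s


def aggregate_bps(calls: int) -> int:
    """One-direction media load for an equal G.711/G.729 split (odd call to G.711)."""
    g711_calls = math.ceil(calls / 2)
    g729_calls = calls - g711_calls
    return g711_calls * ip_rate_bps("G.711") + g729_calls * ip_rate_bps("G.729")


if __name__ == "__main__":
    print(round(setup_time_s(CALLS, CALL_RATE_PER_S), 1))  # 173.1 s
    print(ip_rate_bps("G.711"), ip_rate_bps("G.729"))      # 80000 24000
    print(round(aggregate_bps(CALLS) / 1e9, 2))            # 2.08 Gbit/s
```

The header overhead dominates for G.729: 40 bytes of headers accompany only 20 bytes of voice payload per packet, which is why the low-bit-rate codec still needs 24 kbit/s at the IP layer.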

The question whether mobile service providers will be keen on servicing their mobile subscribers with a SIP gateway in a regional data center is left to the reader. Cisco confirmed that SBCs have a prominent role for LTE voice support in Cisco’s reference design.

Page 12: Demo: Wide Area Application Services (WAAS) Mobile & Mobile Smart Home Application

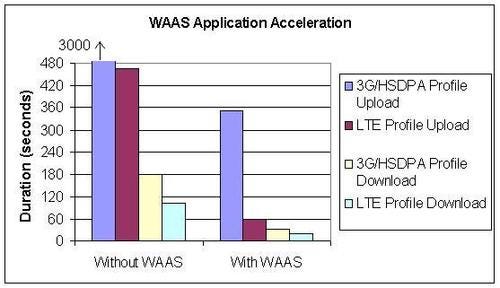

Summary: Cisco demonstrated WAAS Mobile, an application acceleration solution for mobile workers running Windows-based PC clients. A non-representative spot check showed a fivefold reduction in download time and a seven- to eightfold reduction in upload time with WAAS for an uncompressed, Cisco-selected 16-Mbyte Microsoft Word file. In the Mobile Smart Home Application demo, Cisco demonstrated a video-streaming-based home surveillance and management solution from iControl. We witnessed a scalability demonstration in the data center in which 10,000 emulated security panels downloaded surveillance videos over an hour.

Cisco’s Wide Area Application Services (WAAS) Mobile is a client-server-based application acceleration software solution that, as Cisco describes it, optimizes the performance of TCP-based applications operating in Wide Area Networks (WAN). WAAS performs byte sequence caching as well as HTTP object caching. This way, only updated content is transmitted, reducing the total amount of data that the user needs to download over the radio access network. In addition, the documentation claims that some enterprise protocols (such as the Remote Desktop Protocol, RDP) are specifically optimized to cope with high-latency, high-packet-loss networks and that the remaining transmitted content is compressed.
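To illustrate the general idea of byte sequence caching, here is a minimal Python sketch of chunk-level redundancy elimination: both ends keep a cache of previously seen chunks, and the sender replaces any chunk the receiver already holds with a short digest. This is a generic illustration of the technique, not Cisco's WAAS algorithm; the class and function names, the fixed 4-Kbyte chunk size, and SHA-256 digests are assumptions made for the sketch.

```python
import hashlib
from typing import Dict, List, Tuple

CHUNK_SIZE = 4096   # fixed chunk size; an assumption for this sketch
REF_BYTES = 32      # a SHA-256 digest is sent in place of a repeated chunk

Token = Tuple[str, bytes]   # ("raw", chunk bytes) or ("ref", digest)


class ChunkCache:
    """One side of a byte-sequence cache; sender and receiver each keep one."""

    def __init__(self) -> None:
        self.store: Dict[bytes, bytes] = {}

    def encode(self, data: bytes) -> List[Token]:
        tokens: List[Token] = []
        for i in range(0, len(data), CHUNK_SIZE):
            chunk = data[i:i + CHUNK_SIZE]
            digest = hashlib.sha256(chunk).digest()
            if digest in self.store:
                tokens.append(("ref", digest))   # peer already has this chunk
            else:
                self.store[digest] = chunk
                tokens.append(("raw", chunk))    # first sighting: send it
        return tokens

    def decode(self, tokens: List[Token]) -> bytes:
        parts = []
        for kind, value in tokens:
            if kind == "ref":
                parts.append(self.store[value])
            else:
                self.store[hashlib.sha256(value).digest()] = value
                parts.append(value)
        return b"".join(parts)


def wire_bytes(tokens: List[Token]) -> int:
    """Bytes that cross the WAN, ignoring framing overhead."""
    return sum(REF_BYTES if kind == "ref" else len(value) for kind, value in tokens)


if __name__ == "__main__":
    sender, receiver = ChunkCache(), ChunkCache()
    doc = b"".join(hashlib.sha256(str(i).encode()).digest() for i in range(512))
    first = sender.encode(doc)
    assert receiver.decode(first) == doc
    print(wire_bytes(first))                     # 16384: every chunk is new
    edited = doc[:-1] + bytes([doc[-1] ^ 0xFF])  # change only the last byte
    second = sender.encode(edited)
    assert receiver.decode(second) == edited
    print(wire_bytes(second))                    # 4192: three refs + one chunk
```

Fixed-size chunks keep the sketch short but are fragile: inserting a single byte near the start of a file shifts every later chunk boundary. Production deduplication systems typically use content-defined chunking instead, so that boundaries follow the data rather than fixed offsets.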

It took some investigative interviews with Cisco about the inner workings of the WAAS solution to reveal that the term “mobile” in its official name is rather a forward-looking statement. At the moment, Cisco does not have implementations of WAAS for any mobile operating system (such as Windows CE, Symbian, iPhone OS, or Google’s Android). It is available only for Windows XP, Windows Vista, and Windows 7, targeted toward mobile workers with laptops rather than smartphones.

Once installed, WAAS intercepts applications that are listed in its process list, terminates the TCP connection (for example to a Web server) locally, and opens a UDP connection to the WAAS server instead. In turn, the server will open a TCP connection to the original destination server. Hence the connection across the WAN is a kind of UDP-based tunnel.

The whole solution looked rather special and proprietary. The overall concept sounds like an evolution of the Opera Mini browser for cellphones, released four years ago, in which a Java mobile phone client and a server work together to accelerate Web access. Opera Mini raised privacy concerns years ago; the WAAS product is said to be compatible with IPsec encryption, and Cisco encourages positioning the WAAS server at a secure point (in the enterprise?) to avoid such concerns. Obviously, the WAAS clients and servers need to see the unencrypted application protocol to take full advantage of application-specific optimization.

The WAAS Mobile application demonstration was conducted in a separate topology. The WAAS Mobile client was installed on a laptop attached with a Gigabit Ethernet link to the network while at the other end of the network, in the data center, the WAAS Mobile server was installed.

To make the demo more realistic, we inserted Spirent’s XGEM impairment generator in line, emulating delay, delay variation, and throughput limitations of a radio access network. Cisco requested the following two profiles:

50-kbit/s upstream and 1-Mbit/s downstream bandwidth, resembling 3G High-Speed Downlink Packet Access (HSDPA)

320-kbit/s upstream and 4-Mbit/s downstream bandwidth, resembling an LTE situation.

Both profiles had 50-ms delay and up to 10-ms delay variation.

Cisco uploaded and downloaded a 16-Mbyte file in Microsoft Word 97-2003 format, first without the WAAS client active, then with it. Both steps were repeated for each of the two impairment profiles.
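The impairment profiles set a floor on how fast the file can move without acceleration. The Python sketch below computes that serialization floor for the 16-Mbyte file, reading "Mbyte" as 10^6 bytes (an assumption) and ignoring the 50-ms delay, TCP dynamics, and protocol overhead, so the real unaccelerated times can only be longer. Since WAAS cannot make the emulated link faster, a fivefold or greater time reduction relative to a transfer running near link rate implies that far fewer bytes crossed the link, which is consistent with caching and compression of an uncompressed Word file.

```python
FILE_BYTES = 16 * 10**6   # "16-Mbyte" read as decimal megabytes (assumption)

# Impairment profiles requested by Cisco (rates in bit/s).
PROFILES = {
    "3G (HSDPA-like)": {"up": 50_000, "down": 1_000_000},
    "LTE-like": {"up": 320_000, "down": 4_000_000},
}


def serialization_time_s(size_bytes: int, rate_bps: int) -> float:
    """Time to push size_bytes through a link at rate_bps, ignoring delay,
    TCP dynamics, and protocol overhead: a lower bound, not a prediction."""
    return size_bytes * 8 / rate_bps


if __name__ == "__main__":
    for name, rates in PROFILES.items():
        down = serialization_time_s(FILE_BYTES, rates["down"])
        up = serialization_time_s(FILE_BYTES, rates["up"])
        print(f"{name}: download >= {down:.0f} s, upload >= {up:.0f} s")
```

For the 3G-like profile, the upload floor alone is 2,560 seconds (nearly 43 minutes), which shows why upload acceleration matters most on asymmetric links.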

The results confirmed Cisco’s claims for the specific file tested: WAAS Mobile accelerated both downloads and uploads. Whether the same speed-up could be reached with smaller Word files (which are more typical), or with other, pre-compressed files such as PDF, remained unclear.

Please see the diagram below for a summary of the recorded transfer times in the demonstration.

Mobile Smart Home Application demo

In the quest for markets that can benefit from network ubiquity, Cisco has found a new candidate: home security, or in its evolved form, home management. Cisco’s partner iControl produces remote home management applications for consumers, from motion-detection-enabled webcams to security alarms and climate control. The question of why one would need that, and whether it is desirable to have motion-activated cameras at your home that could theoretically watch your family and neighbors remotely at any time, is probably old-fashioned.

Traditionally, other iControl applications have been available over fixed networks given that video streaming takes a substantial amount of bandwidth and that the remote person normally has a job (such as health care adviser, safety and security operator, etc.) that features a wired Internet connection anyway. Mobile connections were merely meant for backup transport.

For the consumer self-surveillance concept, iControl and Cisco joined forces to make video streaming available to mobile phones, specifically the iPhone. High streaming performance in the data center, as well as large bandwidth in the IP core, backhaul, and the radio access networks, is required.

Cisco started off the demonstration by showcasing the iPhone application installed at a Cisco representative’s home. We witnessed video streamed from a webcam on his home’s roof both to a laptop and his iPhone. We can neither deny nor confirm whether his wife was seen holding hands with the gardener at the time. (By the way – consumers signing up for such a service better trust their service provider’s staff and data center security.)

When such videos are streamed, or any other logged events occur, they are stored in a central data center deployed by the operator offering the home management service. iControl’s reference architecture requires network switches in the data center. Cisco obviously claims the ability to provide all components and to make things more efficient in terms of rack space and, potentially, other factors such as power.

The lab contained such a setup: Cisco’s virtualized data center, consisting of two Cisco MDS 9124s, two UCS 6120XPs, one Nexus 5000, and a series of servers and disks. On one server, Cisco ran SLAMD software emulating users requesting video downloads. In parallel, iControl software emulated a total of 53,004 home security panels. The idea was to emulate a peak period in which the maximum number of supported users requested videos from their homes, and to see how many videos could be downloaded. We were told that around 10,000 of the emulated security panels were GE’s EXT panels and roughly 40,000 were Honeywell Lynx panels. Videos were downloaded only through the emulated GE security panels.

To capture packets, we used a monitor port on a Nexus 7000 that the traffic also traversed. Finally, we ran the SLAMD software for exactly one hour, requesting video clips. The emulated users ran transactions in a loop, each consisting of a login, six consecutive video downloads, and a logout. The video clip was a 1.078-Mbyte MPEG-4 file.

At the end of the hour, SLAMD reported that 38,413 logins had been executed. Each login command was packaged with a video download request, which also meant 38,413 video downloads, followed by a total of 192,022 post-login download requests. This comes to a total of 230,435 video downloads within the one-hour run, roughly equivalent to a steady 500 Mbit/s of downstream throughput at the data center. With this, we can quantify the kind of user traffic one could expect to support with Cisco’s infrastructure running iControl’s home management solution.
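The download totals can be cross-checked with a few lines of Python. Reading "1.078 Megabytes" as 1,078,000 bytes (an assumption) and counting payload only, without HTTP/TCP/IP overhead, 230,435 clips in one hour average about 552 Mbit/s, the same order as the rounded figure above; reading Megabyte as 2^20 bytes would raise it to about 579 Mbit/s. The sketch also checks the transaction structure: six downloads per transaction, the first bundled with the login, allow at most five post-login downloads per login, and the small shortfall is consistent with transactions cut off when the hour ended.

```python
LOGINS = 38_413                  # each login bundled with one video download
POST_LOGIN_DOWNLOADS = 192_022
CLIP_BYTES = 1_078_000           # "1.078 Megabytes", read as decimal MB (assumption)
RUN_S = 3600


def total_downloads(logins: int, post_login: int) -> int:
    return logins + post_login


def avg_throughput_bps(downloads: int, clip_bytes: int, run_s: int) -> float:
    """Average payload rate over the run, excluding HTTP/TCP/IP overhead."""
    return downloads * clip_bytes * 8 / run_s


if __name__ == "__main__":
    n = total_downloads(LOGINS, POST_LOGIN_DOWNLOADS)
    print(n)                                                       # 230435
    print(round(avg_throughput_bps(n, CLIP_BYTES, RUN_S) / 1e6))   # 552 Mbit/s
    # At most five post-login downloads per login; the remainder is the
    # number of downloads "missing" from incomplete final transactions.
    print(5 * LOGINS - POST_LOGIN_DOWNLOADS)                       # 43
```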

Page 13: Results: Data Center Interconnect

Summary: Cisco demonstrated the transfer of 20 Terabytes of data, using maximum jumbo-sized Ethernet frames, at line rate across Nexus 7000 and CRS-3 (the latter equipped with 100-Gigabit Ethernet) in 26 minutes and 40 seconds.

Cisco’s Data Center Interconnect (DCI) System is a solution used to extend transparent Ethernet segments (subnets) beyond the traditional boundaries of a single-site data center. By stretching the network space across two or more data centers, the DCI solution is able to facilitate the enterprise’s server virtualization strategy, support high performance, and provide nonstop access to critical business applications.

In this test, Cisco asked us to verify that its data-center-to-data-center interconnect, built by connecting two Nexus 7000s over a pair of CRS-3 routers linked at 100 Gbit/s, could transfer very large data sets while enjoying the full benefits of the CRS-3’s newly introduced 100GbE interfaces. Cisco used a back-of-the-envelope calculation to figure out that the Library of Congress (20 Terabytes of data, as the common belief goes) could theoretically be transferred from one data center to another in 1,600 seconds. Cisco was so sure of the solution’s ability that it challenged us to execute exactly this test. (The main 100-Gigabit Ethernet tests on the CRS-3 are documented in the first part of the article.)

We attached ten 10-Gbit/s tester interfaces to each of the two Nexus 7000s and started sending the largest possible Ethernet frames (over this medium: 9,216 bytes) from one end to the other, clocking our transfer at 1,600 seconds (26 minutes, 40 seconds). The question was simply: Had 20 Terabytes been successfully transferred by the end of the test interval?
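Cisco's back-of-the-envelope figure is easy to reproduce, and the ten 10-Gbit/s tester ports per side add up to exactly the 100 Gbit/s the CRS-3 link carries. The Python sketch below treats 20 Terabytes as 20 × 10^12 bytes (an assumption) and also computes the maximum frame rate for 9,216-byte frames, counting the standard 8 bytes of preamble/start-of-frame delimiter and the 12-byte minimum inter-frame gap that every Ethernet frame occupies on the wire. Because of that per-frame overhead, frame bytes fill about 99.8 percent of the wire time, so the 1,600-second figure is a close idealization rather than an exact count.

```python
DATA_BYTES = 20 * 10**12         # 20 Terabytes, decimal (assumption)
LINK_BPS = 100 * 10**9           # 100GbE between the two CRS-3 routers
FRAME_BYTES = 9216               # largest frame size used in the test
PER_FRAME_WIRE_BYTES = 8 + 12    # preamble/SFD + minimum inter-frame gap


def ideal_transfer_s(data_bytes: int, link_bps: int) -> float:
    """Back-of-the-envelope time, treating every bit on the wire as data."""
    return data_bytes * 8 / link_bps


def frames_per_s(link_bps: int, frame_bytes: int) -> float:
    """Maximum frame rate at line rate, including preamble and inter-frame gap."""
    return link_bps / ((frame_bytes + PER_FRAME_WIRE_BYTES) * 8)


if __name__ == "__main__":
    print(ideal_transfer_s(DATA_BYTES, LINK_BPS))     # 1600.0 s = 26 min 40 s
    print(round(frames_per_s(LINK_BPS, FRAME_BYTES)))  # about 1.35 million frames/s
    # Share of wire time occupied by frame bytes rather than preamble and gap.
    print(round(FRAME_BYTES / (FRAME_BYTES + PER_FRAME_WIRE_BYTES), 4))  # 0.9978
```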

The test setup emulated 10 file servers in each of the two data centers. Half of them were used to source the traffic and the other half to receive the data transfer, much like the off-site backups that regularly happen across geographically dispersed data centers.

As a result, the test confirmed Cisco’s claim: The full amount of data was transferred at line rate within the time calculated in advance.

Page 14: Conclusion

Over the course of this massive testing project we've been able to demonstrate and observe many facets of Cisco's mobile network:

Cisco's MWR2941 showing accurate frequency synchronization up to LTE MBMS requirements using a hybrid solution with IEEE1588 (Precision Time Protocol) and Synchronous Ethernet.

Cisco's ASR 9010 and 7609 platforms appropriately classifying and prioritizing five classes of traffic during link congestion.

The CRS-1 Route Processor Card had no BGP session or IP frame loss during our component failover tests.

A live switch fabric upgrade of a CRS-1 router to a CRS-3 under load, enabling 100-Gigabit Ethernet scale. The test resulted in less than 0.1 milliseconds out-of-service time – sometimes even zero packet loss.

Cisco's network recovered after a physical link failure in the IP-RAN backhaul as quickly as 28 milliseconds in the aggregation and 91 milliseconds in the pre-aggregation.

Nearly 120 Gbit/s of lossless traffic across a series of 12 10-Gigabit Ethernet links traversing Cisco's ASR 9010 and Nexus 7000.

Cisco's CRS-3 100-Gigabit Ethernet interfaces achieved throughput of 100 percent full line rate for IPv4 packets of 98 bytes and larger, IPv6 packets of 124 bytes and larger, and for IMIX packet size mixes for both IPv4 and IPv6.

The CRS-1 platform achieved 40 million NAT64 translations between IPv6 and IPv4 addresses and recovered from a NAT64 module failure.

Cisco’s ASR 5000, configured as an SGSN, could support 4,200 attachments per second and scale up to over a million attachments. These attachments represent users that are looking to access network resources.

The ASR 5000, acting as a GGSN, survived three different kinds of hardware failure tests and passed with flying colors. Also, 3G installations of the device worked seamlessly when systems were added for LTE support.

Cisco’s ASR 1006 can scale to support up to a million open connections with its NAT and firewall functionality.

All of this is to say that Cisco, indeed, showed it is capable of delivering to operators the parts to build a next-gen mobile network that is scalable and shows state-of-the-art functionality. The mobile core tests of the ASR 5000, acquired from Starent Networks, are particularly noteworthy because they show Cisco's position in the mobile core is rock-solid.

As we noted in the introduction to our first report, it will be up to the carrier to integrate billing, fault management, and all the other functions that make an LTE network function. But our tests show Cisco can comfortably claim that it has the building blocks for key components in the backhaul, core wireline infrastructure, and mobile core, and that it can support all mobile network generations and scale sufficiently to meet the needs of the world's largest carriers.

— Carsten Rossenhövel, Managing Director; Jambi Ganbar, Project Manager; and Jonathan Morin, Senior Test Engineer, European Advanced Networking Test Center AG (EANTC)
